blip-2

Maintainer: andreasjansson

21.4K

| Property | Value |
|---|---|
| Model Link | View on Replicate |
| API Spec | View on Replicate |
| GitHub Link | View on GitHub |
| Paper Link | View on arXiv |

Model overview

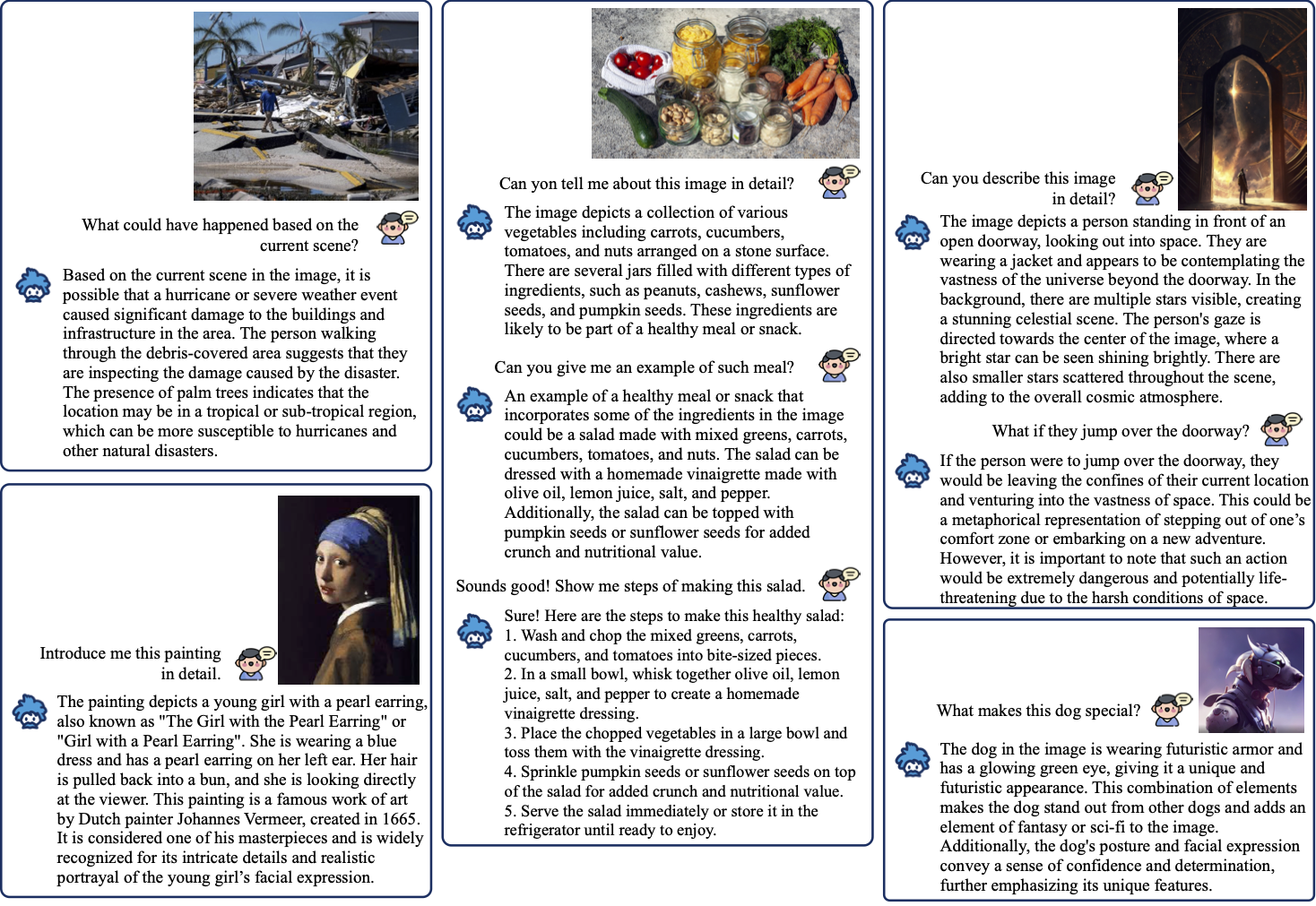

blip-2 is a visual question answering model developed by Salesforce's LAVIS team and packaged as a lightweight Cog model. It can answer questions about images or generate captions. It builds on the original BLIP model, with reported improvements in speed and accuracy. Whereas models such as bunny-phi-2-siglip offer a broader set of multimodal capabilities, blip-2 focuses specifically on visual question answering.

Model inputs and outputs

blip-2 takes an image, an optional question, and optional context as inputs. It can either answer the question or produce a caption for the image. The output is a single string containing the response.

Inputs

- Image: The input image to query or caption

- Caption: A boolean flag to indicate if you want to generate image captions instead of answering a question

- Context: Optional previous questions and answers to provide context for the current question

- Question: The question to ask about the image

- Temperature: The temperature parameter for nucleus sampling

- Use Nucleus Sampling: A boolean flag to toggle the use of nucleus sampling

Outputs

- Output: The generated answer or caption
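The inputs listed above can be sketched as a small payload builder. This is a minimal illustration, not the model's confirmed schema: the snake_case key names, the defaults, and the example image URL are all assumptions, so check the API spec before relying on them.

```python
from typing import Any, Dict, Optional


def build_blip2_input(
    image: str,
    question: Optional[str] = None,
    caption: bool = False,
    context: Optional[str] = None,
    temperature: float = 1.0,
    use_nucleus_sampling: bool = False,
) -> Dict[str, Any]:
    """Assemble an input payload mirroring the inputs listed above.

    The key names and default values are assumptions made for this sketch.
    """
    if not image:
        raise ValueError("image is required")
    if not caption and not question:
        raise ValueError("provide a question, or set caption=True")

    payload: Dict[str, Any] = {
        "image": image,
        "caption": caption,
        "temperature": temperature,
        "use_nucleus_sampling": use_nucleus_sampling,
    }
    # Optional fields are only included when they carry a value.
    # A question is dropped in caption mode, where it would be unused.
    if question and not caption:
        payload["question"] = question
    if context:
        payload["context"] = context
    return payload


# Hypothetical image URL, used only for illustration.
vqa_input = build_blip2_input(
    "https://example.com/dog.jpg", question="What animal is this?"
)
caption_input = build_blip2_input("https://example.com/dog.jpg", caption=True)
```

With the Replicate Python client, a dictionary like this would typically be passed as the `input` argument of `replicate.run`. The exact model reference, and whether a pinned version is required, should be taken from the model page.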

Capabilities

blip-2 can answer a wide range of questions about images, from identifying objects and describing an image's contents to more complex, reasoning-based questions. It can also generate natural language captions. Its performance is described as on par with or better than that of similar visual question answering models.

What can I use it for?

blip-2 can be a valuable tool for building applications that require image understanding and question-answering capabilities, such as virtual assistants, image-based search engines, or educational tools. Its lightweight, cog-based architecture makes it easy to integrate into a variety of projects. Developers could use blip-2 to add visual question-answering features to their applications, allowing users to interact with images in more natural and intuitive ways.

Things to try

One interesting application of blip-2 could be to use it in a conversational agent that can discuss and explain images with users. By leveraging the model's ability to answer questions and provide context, the agent could engage in natural, back-and-forth dialogues about visual content. Developers could also explore using blip-2 to enhance image-based search and discovery tools, allowing users to find relevant images by asking questions about their contents.
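For the conversational use case, earlier turns need to be folded into the Context input. The sketch below shows one way to do that. The `Question: ... Answer: ...` layout is an assumption chosen for illustration, since this page does not specify the format the model expects, and `format_context` is a hypothetical helper.

```python
from typing import List, Tuple


def format_context(history: List[Tuple[str, str]]) -> str:
    """Join earlier (question, answer) turns into one context string.

    The "Question: ... Answer: ..." layout is an assumed convention.
    """
    lines = []
    for question, answer in history:
        lines.append(f"Question: {question.strip()} Answer: {answer.strip()}")
    return "\n".join(lines)


# Example dialogue so far (made-up answers, for illustration only).
history = [
    ("What is in the picture?", "a dog on a beach"),
    ("What color is the dog?", "brown"),
]
context = format_context(history)
# `context` would be supplied as the Context input alongside the next question.
```

Keeping the history as structured pairs and formatting it only at call time makes it easy to truncate old turns if the context grows too long.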

This summary was produced with help from an AI and may contain inaccuracies - check out the links to read the original source documents!

Related Models

blip

81.8K

BLIP (Bootstrapping Language-Image Pre-training) is a vision-language model developed by Salesforce that can be used for a variety of tasks, including image captioning, visual question answering, and image-text retrieval. The model is pre-trained on a large dataset of image-text pairs and can be fine-tuned for specific tasks. Compared to similar models like blip-vqa-base, blip-image-captioning-large, and blip-image-captioning-base, BLIP is a more general-purpose model that can be used for a wider range of vision-language tasks.

Model inputs and outputs

BLIP takes in an image and either a caption or a question as input, and generates an output response. The model can be used for both conditional and unconditional image captioning, as well as open-ended visual question answering.

Inputs

- Image: An image to be processed

- Caption: A caption for the image (for image-text matching tasks)

- Question: A question about the image (for visual question answering tasks)

Outputs

- Caption: A generated caption for the input image

- Answer: An answer to the input question about the image

Capabilities

BLIP is capable of generating high-quality captions for images and answering questions about the visual content of images. The model has been shown to achieve state-of-the-art results on a range of vision-language tasks, including image-text retrieval, image captioning, and visual question answering.

What can I use it for?

You can use BLIP for a variety of applications that involve processing and understanding visual and textual information, such as:

- Image captioning: Generate descriptive captions for images, which can be useful for accessibility, image search, and content moderation.

- Visual question answering: Answer questions about the content of images, which can be useful for building interactive interfaces and automating customer support.

- Image-text retrieval: Find relevant images based on textual queries, or find relevant text based on visual input, which can be useful for building image search engines and content recommendation systems.

Things to try

One interesting aspect of BLIP is its ability to perform zero-shot video-text retrieval, where the model can directly transfer its understanding of vision-language relationships to the video domain without any additional training. This suggests that the model has learned rich and generalizable representations of visual and textual information that can be applied to a variety of tasks and modalities.

Another interesting capability of BLIP is its use of a "bootstrap" approach to pre-training, where the model first generates synthetic captions for web-scraped image-text pairs and then filters out the noisy captions. This allows the model to effectively utilize large-scale web data, which is a common source of supervision for vision-language models, while mitigating the impact of noisy or irrelevant image-text pairs.

clip-features

55.8K

The clip-features model, developed by Replicate creator andreasjansson, is a Cog model that outputs CLIP features for text and images. This model builds on the powerful CLIP architecture, which was developed by researchers at OpenAI to learn about robustness in computer vision tasks and test the ability of models to generalize to arbitrary image classification in a zero-shot manner. Similar models like blip-2 and clip-embeddings also leverage CLIP capabilities for tasks like answering questions about images and generating text and image embeddings.

Model inputs and outputs

The clip-features model takes a set of newline-separated inputs, which can either be strings of text or image URIs starting with http[s]://. The model then outputs an array of named embeddings, where each embedding corresponds to one of the input entries.

Inputs

- Inputs: Newline-separated inputs, which can be strings of text or image URIs starting with http[s]://.

Outputs

- Output: An array of named embeddings, where each embedding corresponds to one of the input entries.

Capabilities

The clip-features model can be used to generate CLIP features for text and images, which can be useful for a variety of downstream tasks like image classification, retrieval, and visual question answering. By leveraging the powerful CLIP architecture, this model can enable researchers and developers to explore zero-shot and few-shot learning approaches for their computer vision applications.

What can I use it for?

The clip-features model can be used in a variety of applications that involve understanding the relationship between images and text. For example, you could use it to:

- Perform image-text similarity search, where you can find the most relevant images for a given text query, or vice versa.

- Implement zero-shot image classification, where you can classify images into categories without any labeled training data.

- Develop multimodal applications that combine vision and language, such as visual question answering or image captioning.

Things to try

One interesting aspect of the clip-features model is its ability to generate embeddings that capture the semantic relationship between text and images. You could try using these embeddings to explore the similarities and differences between various text and image pairs, or to build applications that leverage this cross-modal understanding. For example, you could calculate the cosine similarity between the embeddings of different text inputs and the embedding of a given image, as demonstrated in the provided example code. This could be useful for tasks like image-text retrieval or for understanding the model's perception of the relationship between visual and textual concepts.
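The cosine-similarity comparison mentioned above can be sketched in plain Python. The vectors here are toy 3-dimensional placeholders rather than real CLIP embeddings (which have hundreds of dimensions), and the candidate captions are made up for illustration.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


# Toy vectors standing in for embeddings returned by the model.
image_embedding = [0.2, 0.9, 0.1]
text_embeddings = {
    "a photo of a dog": [0.1, 0.8, 0.2],
    "a photo of a car": [0.9, 0.1, 0.3],
}

# Score every candidate text against the image and keep the closest one.
scores = {
    text: cosine_similarity(image_embedding, embedding)
    for text, embedding in text_embeddings.items()
}
best_match = max(scores, key=scores.get)
```

Ranking texts by this score is the basic mechanism behind zero-shot classification and image-text retrieval with CLIP-style embeddings.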

stable-diffusion

107.9K

Stable Diffusion is a latent text-to-image diffusion model capable of generating photo-realistic images given any text input. Developed by Stability AI, it is an impressive AI model that can create stunning visuals from simple text prompts. The model has several versions, with each newer version being trained for longer and producing higher-quality images than the previous ones.

The main advantage of Stable Diffusion is its ability to generate highly detailed and realistic images from a wide range of textual descriptions. This makes it a powerful tool for creative applications, allowing users to visualize their ideas and concepts in a photorealistic way. The model has been trained on a large and diverse dataset, enabling it to handle a broad spectrum of subjects and styles.

Model inputs and outputs

Inputs

- Prompt: The text prompt that describes the desired image. This can be a simple description or a more detailed, creative prompt.

- Seed: An optional random seed value to control the randomness of the image generation process.

- Width and Height: The desired dimensions of the generated image, which must be multiples of 64.

- Scheduler: The algorithm used to generate the image, with options like DPMSolverMultistep.

- Num Outputs: The number of images to generate (up to 4).

- Guidance Scale: The scale for classifier-free guidance, which controls the trade-off between image quality and faithfulness to the input prompt.

- Negative Prompt: Text that specifies things the model should avoid including in the generated image.

- Num Inference Steps: The number of denoising steps to perform during the image generation process.

Outputs

- Array of image URLs: The generated images are returned as an array of URLs pointing to the created images.

Capabilities

Stable Diffusion is capable of generating a wide variety of photorealistic images from text prompts. It can create images of people, animals, landscapes, architecture, and more, with a high level of detail and accuracy. The model is particularly skilled at rendering complex scenes and capturing the essence of the input prompt.

One of the key strengths of Stable Diffusion is its ability to handle diverse prompts, from simple descriptions to more creative and imaginative ideas. The model can generate images of fantastical creatures, surreal landscapes, and even abstract concepts with impressive results.

What can I use it for?

Stable Diffusion can be used for a variety of creative applications, such as:

- Visualizing ideas and concepts for art, design, or storytelling

- Generating images for use in marketing, advertising, or social media

- Aiding in the development of games, movies, or other visual media

- Exploring and experimenting with new ideas and artistic styles

The model's versatility and high-quality output make it a valuable tool for anyone looking to bring their ideas to life through visual art. By combining the power of AI with human creativity, Stable Diffusion opens up new possibilities for visual expression and innovation.

Things to try

One interesting aspect of Stable Diffusion is its ability to generate images with a high level of detail and realism. Users can experiment with prompts that combine specific elements, such as "a steam-powered robot exploring a lush, alien jungle," to see how the model handles complex and imaginative scenes.

Additionally, the model's support for different image sizes and resolutions allows users to explore the limits of its capabilities. By generating images at various scales, users can see how the model handles the level of detail and complexity required for different use cases, such as high-resolution artwork or smaller social media graphics.

Overall, Stable Diffusion is a powerful and versatile AI model that offers endless possibilities for creative expression and exploration. By experimenting with different prompts, settings, and output formats, users can unlock the full potential of this cutting-edge text-to-image technology.

instructblip

526

InstructBLIP is an image captioning model that leverages vision-language models with instruction tuning. It builds upon the BLIP family of models (specifically BLIP-2), which use a bootstrapping language-image pre-training approach. InstructBLIP aims to be a more general-purpose vision-language model by incorporating instruction tuning, which allows it to better understand and follow natural language instructions. This model can be contrasted with other multimodal models like LLaVA-13B and Stable Diffusion, which focus on visual instruction tuning and text-to-image generation respectively.

Model inputs and outputs

InstructBLIP takes an image as input and generates a text description of that image. The key inputs are the image path, a prompt to guide the caption, and various parameters to control the output length, sampling, and penalties. The model outputs a text string containing the generated caption.

Inputs

- Image Path: The path to the image to be captioned

- Prompt: The natural language prompt to guide the caption generation

- Max Len: The maximum length of the generated caption

- Min Len: The minimum length of the generated caption

- Beam Size: The number of candidate captions to consider

- Len Penalty: A penalty factor applied to the length of the generated caption

- Repetition Penalty: A penalty factor applied to repeated tokens in the generated caption

- Top P: The top-p nucleus sampling parameter to control the randomness of the output

- Use Nucleus Sampling: A boolean to enable or disable the use of nucleus sampling

Outputs

- Output: The generated text caption for the input image

Capabilities

InstructBLIP is capable of generating human-like image captions that are tailored to the provided prompt. It can understand and follow natural language instructions to produce captions that are relevant and contextual. The model has been trained on a large dataset of image-text pairs, giving it a broad knowledge base to draw from.

What can I use it for?

You can use InstructBLIP for a variety of applications that require generating textual descriptions of images, such as:

- Automating the captioning of images in a content management system or e-commerce platform

- Enhancing accessibility by providing alt-text descriptions for images

- Generating captions for social media posts or marketing materials

- Powering image-based search or retrieval systems

The instruction tuning capabilities of InstructBLIP also make it well-suited for more specialized tasks, such as generating captions for medical images or providing detailed technical descriptions of engineering diagrams.

Things to try

One interesting aspect of InstructBLIP is its ability to generate captions that adhere to specific instructions or constraints. For example, you could try providing prompts that ask the model to describe the image from a particular perspective (e.g., "Describe the scene as if you were a young child looking at the image") or to focus on certain visual elements (e.g., "Describe the colors and textures in the image"). Experimenting with different prompts and parameters can help you uncover the model's versatility and discover new ways to leverage its capabilities.
