consistent-character

Maintainer: fofr


| Property | Value |
|---|---|
| Run this model | Run on Replicate |
| API spec | View on Replicate |
| GitHub link | View on GitHub |
| Paper link | No paper link provided |


Model overview

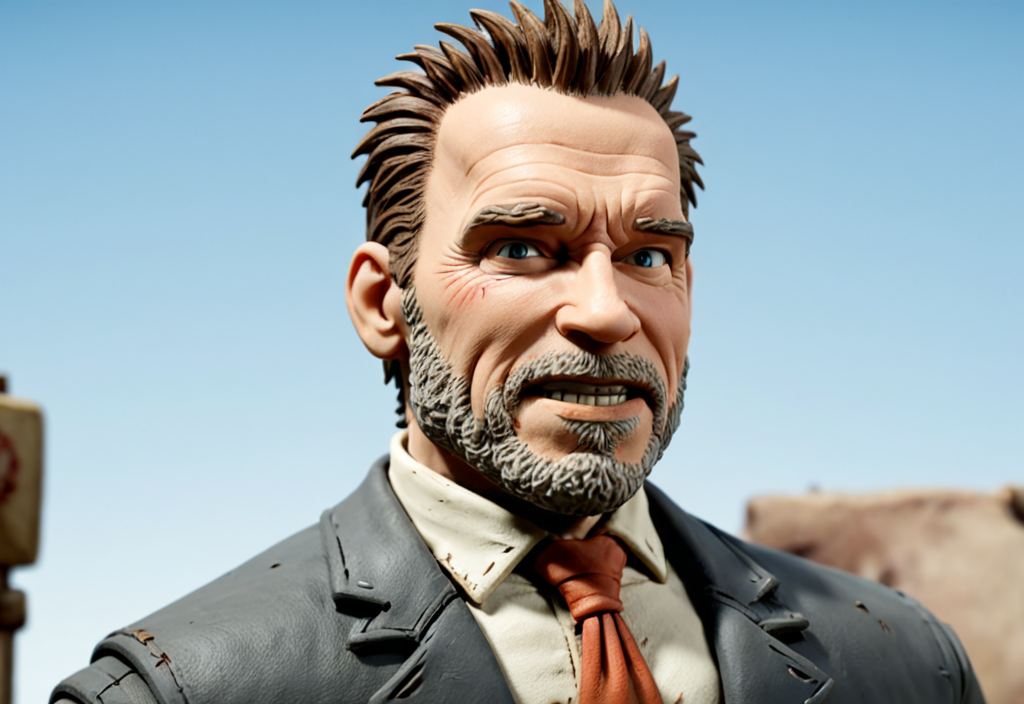

The consistent-character model, created by fofr, generates images of a given character in different poses. It is similar to other character generation models such as become-image, sdxl-simpsons-characters, pulid-base, and face-to-many, but it focuses on keeping one character's appearance consistent across poses rather than transforming or generating characters in different styles.

Model inputs and outputs

The consistent-character model takes in a prompt, a subject image, and various other parameters to control the output. The outputs are a set of images of the character in different poses, generated based on the input.

Inputs

- Prompt: A textual description of the character, including details about their clothes and hairstyle, to help maintain consistency.

- Subject: An image of the person to be used as the basis for the character.

- Negative prompt: Things you do not want to see in the generated images.

- Number of outputs: The number of images to generate.

- Number of images per pose: The number of images to generate for each pose.

- Randomise poses: Whether to randomize the poses used.

- Output format and quality: The format and quality of the output images.

Outputs

- A set of images of the character in different poses, generated based on the input.
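
The inputs listed above map naturally onto a request payload. The following is a minimal sketch in Python, assuming the official `replicate` client package, the model slug `fofr/consistent-character`, and snake_case key names derived from the input labels in this article. The key names, default values, and placeholder URL are assumptions for illustration, so check the model's API spec on Replicate before relying on them.

```python
from typing import Any, Dict

# Assumed model slug: the owner prefix matches the maintainer named in this
# article, but the exact identifier should be checked on Replicate.
MODEL = "fofr/consistent-character"


def build_input(
    prompt: str,
    subject: str,
    negative_prompt: str = "",
    number_of_outputs: int = 3,
    number_of_images_per_pose: int = 1,
    randomise_poses: bool = True,
    output_format: str = "webp",
    output_quality: int = 80,
) -> Dict[str, Any]:
    """Assemble an input payload.

    Key names and defaults are assumptions based on the input labels in
    this article, not a confirmed schema.
    """
    if number_of_outputs < 1:
        raise ValueError("number_of_outputs must be at least 1")
    if number_of_images_per_pose < 1:
        raise ValueError("number_of_images_per_pose must be at least 1")
    if not 0 <= output_quality <= 100:
        raise ValueError("output_quality must be between 0 and 100")
    return {
        "prompt": prompt,
        "subject": subject,  # URL of the reference image of the person
        "negative_prompt": negative_prompt,
        "number_of_outputs": number_of_outputs,
        "number_of_images_per_pose": number_of_images_per_pose,
        "randomise_poses": randomise_poses,
        "output_format": output_format,
        "output_quality": output_quality,
    }


def generate(payload: Dict[str, Any]) -> Any:
    """Send the payload to Replicate.

    Requires `pip install replicate` and the REPLICATE_API_TOKEN environment
    variable. The import is lazy so build_input works without the client.
    """
    import replicate

    return replicate.run(MODEL, input=payload)


if __name__ == "__main__":
    payload = build_input(
        prompt="A woman with short red hair wearing a green jacket",
        subject="https://example.com/subject.png",  # placeholder URL
    )
    print(payload)
```

Describing clothes and hairstyle in the prompt, as the Prompt input suggests, is what the example prompt is meant to illustrate. Calling `generate(payload)` would return the model's output, which the article describes as a set of images.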

Capabilities

The consistent-character model can generate high-quality images of a character in different poses, maintaining a consistent appearance and style. This can be useful for creating character designs, illustrations, or even animation assets.

What can I use it for?

The consistent-character model can be used for a variety of applications, such as:

- Creating character designs and illustrations for games, animations, or other media.

- Generating character assets for use in 3D modeling or animation software.

- Experimenting with different poses and compositions for a character.

- Exploring character design ideas and iterating on them quickly.

Things to try

Try varying the prompt and subject image to see how the generated poses and character details change. You can also adjust the other input parameters, such as the number of outputs or the randomization of poses, to see how they affect the generated images.
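
One way to organise this kind of experiment is a small parameter sweep. The sketch below only builds the input variants and does not call any API. The key names (`number_of_outputs`, `randomise_poses`) and the placeholder subject URL are assumptions for illustration, not a confirmed schema.

```python
from itertools import product
from typing import Any, Dict, Iterator, List

# Hypothetical base input; the subject URL is a placeholder.
BASE_INPUT: Dict[str, Any] = {
    "prompt": "A woman with short red hair wearing a green jacket",
    "subject": "https://example.com/subject.png",
}


def sweep(base: Dict[str, Any], grid: Dict[str, List[Any]]) -> Iterator[Dict[str, Any]]:
    """Yield one input dict per combination of the values in `grid`."""
    keys = list(grid)
    for values in product(*(grid[key] for key in keys)):
        # Copy the base input so each variant is independent of the others.
        yield {**base, **dict(zip(keys, values))}


variants = list(
    sweep(
        BASE_INPUT,
        {
            "number_of_outputs": [1, 3],
            "randomise_poses": [True, False],
        },
    )
)

for variant in variants:
    print(variant["number_of_outputs"], variant["randomise_poses"])
```

Each variant can then be submitted separately and the results compared side by side, which makes it easier to attribute a change in the images to a single parameter.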

This summary was produced with help from an AI and may contain inaccuracies - check out the links to read the original source documents!

Related Models

become-image


The become-image model, created by maintainer fofr, is an AI-powered tool that allows you to adapt any picture of a face into another image. This model is similar to other face transformation models like face-to-many, which can turn a face into various styles like 3D, emoji, or pixel art, as well as gfpgan, a practical face restoration algorithm for old photos or AI-generated faces.

Model inputs and outputs

The become-image model takes in several inputs, including an image of a person, a prompt describing the desired output, a negative prompt to exclude certain elements, and various parameters to control the strength and style of the transformation. The model then generates one or more images that depict the person in the desired style.

Inputs

- Image: An image of a person to be converted

- Prompt: A description of the desired output image

- Negative Prompt: Things you do not want in the image

- Number of Images: The number of images to generate

- Denoising Strength: How much of the original image to keep

- Instant ID Strength: The strength of the InstantID

- Image to Become Noise: The amount of noise to add to the style image

- Control Depth Strength: The strength of the depth controlnet

- Disable Safety Checker: Whether to disable the safety checker for generated images

Outputs

- An array of generated images in the desired style

Capabilities

The become-image model can adapt any picture of a face into a wide variety of styles, from realistic to fantastical. This can be useful for creative projects, generating unique profile pictures, or even producing concept art for games or films.

What can I use it for?

With the become-image model, you can transform portraits into various artistic styles, such as anime, cartoon, or even psychedelic interpretations. This could be used to create unique profile pictures, avatars, or even illustrations for a variety of applications, from social media to marketing materials. Additionally, the model could be used to explore different creative directions for character design in games, movies, or other media.

Things to try

One interesting aspect of the become-image model is the ability to experiment with the various input parameters, such as the prompt, negative prompt, and denoising strength. By adjusting these settings, you can create a wide range of unique and unexpected results, from subtle refinements to the original image to completely surreal and fantastical transformations. Additionally, you can try combining the become-image model with other AI tools, such as those for text-to-image generation or image editing, to further explore the creative possibilities.


face-to-many


The face-to-many model is a versatile AI tool that allows you to turn any face into a variety of artistic styles, such as 3D, emoji, pixel art, video game, claymation, or toy. Developed by fofr, this model is part of a larger collection of creative AI tools on the Replicate platform. Similar models include sticker-maker for generating stickers with transparent backgrounds, real-esrgan for high-quality image upscaling, and instant-id for creating realistic images of people.

Model inputs and outputs

The face-to-many model takes in an image of a person's face and a target style, allowing you to transform the face into a range of artistic representations. The model outputs an array of generated images in the selected style.

Inputs

- Image: An image of a person's face to be transformed

- Style: The desired artistic style to apply, such as 3D, emoji, pixel art, video game, claymation, or toy

- Prompt: A text description to guide the image generation (default is "a person")

- Negative Prompt: Text describing elements you don't want in the image

- Prompt Strength: The strength of the prompt, with higher numbers leading to a stronger influence

- Denoising Strength: How much of the original image to keep, with 1 being a complete destruction and 0 being the original

- Instant ID Strength: The strength of the InstantID model used to preserve the identity of the input face

- Control Depth Strength: The strength of the depth controlnet, affecting how much it influences the output

- Seed: A fixed random seed for reproducibility

- Custom LoRA URL: An optional URL to a custom LoRA (Low-Rank Adaptation) model

- LoRA Scale: The strength of the custom LoRA model

Outputs

- An array of generated images in the selected artistic style

Capabilities

The face-to-many model excels at transforming faces into a wide range of artistic styles, from detailed 3D rendering to whimsical pixel art or claymation. The model's ability to capture the essence of the original face while applying these styles makes it a powerful tool for creative projects, digital art, and even product design.

What can I use it for?

With the face-to-many model, you can create unique and eye-catching visuals for a variety of applications, such as:

- Generating custom avatars or character designs for video games, apps, or social media

- Producing stylized portraits or profile pictures with a distinctive flair

- Designing fun and engaging stickers, emojis, or other digital assets

- Prototyping physical products like toys, figurines, or collectibles

- Exploring creative ideas and experimenting with different artistic interpretations of a face

Things to try

The face-to-many model offers a wide range of possibilities for creative experimentation. Try combining different styles, adjusting the input parameters, or using custom LoRA models to see how the output can be further tailored to your specific needs.


style-transfer


The style-transfer model allows you to transfer the style of one image to another. This can be useful for creating artistic and visually interesting images by blending the content of one image with the style of another. The model is similar to other image manipulation models like become-image and image-merger, which can be used to adapt or combine images in different ways.

Model inputs and outputs

The style-transfer model takes in a content image and a style image, and generates a new image that combines the content of the first image with the style of the second. Users can also provide additional inputs like a prompt, negative prompt, and various parameters to control the output.

Inputs

- Style Image: An image to copy the style from

- Content Image: An image to copy the content from

- Prompt: A description of the desired output image

- Negative Prompt: Things you do not want to see in the output image

- Width/Height: The size of the output image

- Output Format/Quality: The format and quality of the output image

- Number of Images: The number of images to generate

- Structure Depth/Denoising Strength: Controls for the depth and denoising of the output image

Outputs

- Output Images: One or more images generated by the model

Capabilities

The style-transfer model can be used to create unique and visually striking images by blending the content of one image with the style of another. It can be used to transform photographs into paintings, cartoons, or other artistic styles, or to create surreal and imaginative compositions.

What can I use it for?

The style-transfer model could be used for a variety of creative projects, such as generating album covers, book illustrations, or promotional materials. It could also be used to create unique artwork for personal use or to sell on platforms like Etsy or DeviantArt. Additionally, the model could be incorporated into web applications or mobile apps that allow users to experiment with different artistic styles.

Things to try

One interesting thing to try with the style-transfer model is to experiment with different combinations of content and style images. For example, you could take a photograph of a landscape and blend it with the style of a Van Gogh painting, or take a portrait and blend it with the style of a comic book. The model allows for a lot of creative exploration and experimentation.


pulid-base


The pulid-base model is a face generation AI developed by fofr at Replicate. It uses SDXL fine-tuned checkpoints to generate images from a face image input. This model can be particularly useful for tasks like photo editing, avatar creation, or artistic exploration. Compared to similar models like stable-diffusion, pulid-base is specifically focused on face generation, while pulid is a more general ID customization model. The sdxl-deep-down model from the same creator is instead fine-tuned on underwater imagery, making it suitable for different use cases.

Model inputs and outputs

The pulid-base model takes a face image as the primary input, along with a text prompt, seed, size, and various other options to control the style and output format. It then generates one or more images based on the provided inputs.

Inputs

- Face Image: The face image to use for the generation

- Prompt: The text prompt to guide the image generation

- Seed: Set a seed for reproducibility (random by default)

- Width/Height: The size of the output image

- Face Style: The desired style for the generated face

- Output Format: The file format for the output images

- Output Quality: The quality level for the output images

- Negative Prompt: Text to exclude from the generated image

- Checkpoint Model: The model checkpoint to use for generation

Outputs

- Output Images: One or more generated images based on the provided inputs

Capabilities

The pulid-base model can generate photo-realistic face images from a combination of a face image and a text prompt. It can be used to create unique, personalized images by blending the input face with different styles and scenarios described in the prompt. The model is particularly adept at maintaining the identity and features of the input face while generating diverse and visually compelling output images.

What can I use it for?

The pulid-base model can be a powerful tool for a variety of applications, such as:

- Avatar and character creation: Generate unique, custom avatars or character designs for games, social media, or other digital experiences.

- Face editing and enhancement: Enhance or modify existing face images, such as by changing the expression, style, or environment.

- Digital art and illustration: Combine face images with imaginative prompts to create surreal, dreamlike, or stylized artworks.

- Prototyping and visualization: Quickly generate face images to visualize concepts, ideas, or designs involving human subjects.

By leveraging the face-focused capabilities of the pulid-base model, you can create a wide range of personalized and visually striking images to suit your needs.

Things to try

Experiment with different combinations of face images, prompts, and model parameters to see how the pulid-base model can transform a face in unexpected and creative ways. Try using the model to generate portraits with specific moods, emotions, or artistic styles. You can also explore blending the face with different environments, characters, or fantastical elements to produce unique and imaginative results.
