all-in-one-music-structure-analyzer

Maintainer: sakemin


| Property | Value |
|---|---|
| Run this model | Run on Replicate |
| API spec | View on Replicate |
| GitHub link | View on GitHub |
| Paper link | View on arXiv |


Model overview

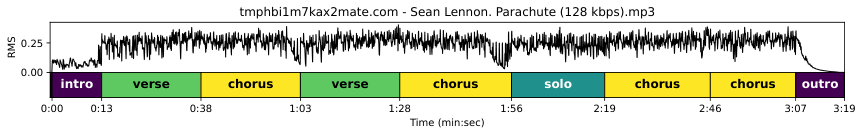

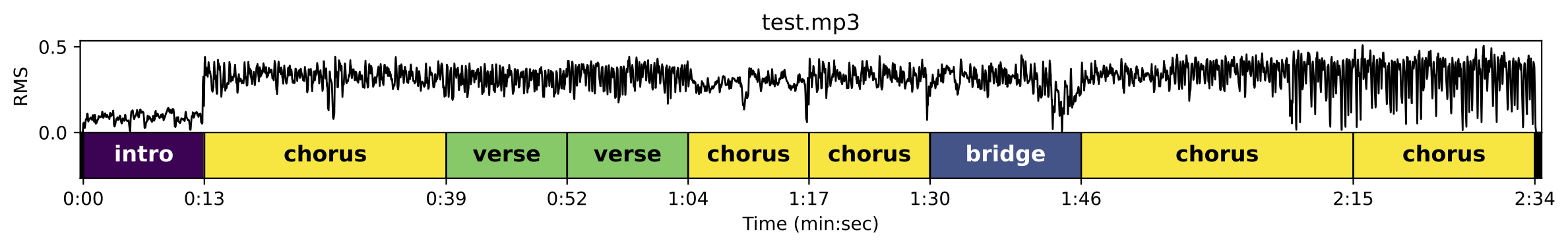

all-in-one-music-structure-analyzer is a Cog implementation of the "All-In-One Music Structure Analyzer" model developed by Taejun Kim. The model analyzes music structure and reports tempo (BPM), beats, downbeats, functional segment boundaries, and functional segment labels (e.g., intro, verse, chorus, bridge, outro). It is trained on the Harmonix Set dataset and applies neighborhood attention to demixed (source-separated) audio. The same maintainer, sakemin, also publishes music generation models such as musicgen-fine-tuner and musicgen-remixer.

Model inputs and outputs

The all-in-one-music-structure-analyzer model takes an audio file as input and outputs a detailed analysis of the music structure. The analysis includes tempo (BPM), beat positions, downbeat positions, and functional segment boundaries and labels.

Inputs

- Music Input: An audio file to analyze.

Outputs

- Tempo (BPM): The estimated tempo of the input audio in beats per minute.

- Beats: The time positions of the detected beats in the audio.

- Downbeats: The time positions of the detected downbeats in the audio.

- Functional Segment Boundaries: The start and end times of the detected functional segments in the audio.

- Functional Segment Labels: The labels of the detected functional segments, such as intro, verse, chorus, bridge, and outro.
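
The outputs listed above could be held in a small typed structure. The following is a minimal sketch only: the field names (`bpm`, `beats`, `downbeats`, `segments`, `start`, `end`, `label`) and the helper `parse_analysis` are assumptions made for illustration and are not confirmed by this page. Check the API spec for the actual output schema.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class Segment:
    start: float  # segment start time in seconds
    end: float    # segment end time in seconds
    label: str    # functional label, e.g. "intro", "verse", "chorus"


@dataclass(frozen=True)
class Analysis:
    bpm: float
    beats: list[float]      # beat times in seconds
    downbeats: list[float]  # downbeat times in seconds
    segments: list[Segment]


def parse_analysis(raw: dict[str, Any]) -> Analysis:
    """Convert a decoded JSON object (hypothetical schema) into an Analysis."""
    segments = [
        Segment(float(s["start"]), float(s["end"]), str(s["label"]))
        for s in raw["segments"]
    ]
    return Analysis(
        bpm=float(raw["bpm"]),
        beats=[float(t) for t in raw["beats"]],
        downbeats=[float(t) for t in raw["downbeats"]],
        segments=segments,
    )


# Made-up example data, not real model output.
example = {
    "bpm": 120,
    "beats": [0.5, 1.0, 1.5, 2.0],
    "downbeats": [0.5],
    "segments": [
        {"start": 0.0, "end": 8.0, "label": "intro"},
        {"start": 8.0, "end": 24.0, "label": "verse"},
    ],
}
analysis = parse_analysis(example)
print(analysis.bpm, [s.label for s in analysis.segments])
```

Keeping segments as explicit start/end/label records makes later steps, such as filtering by label or computing durations, straightforward.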

Capabilities

The all-in-one-music-structure-analyzer model can provide a comprehensive analysis of the musical structure of an audio file, including tempo, beats, downbeats, and functional segment information. This information can be useful for various applications, such as music information retrieval, automatic music transcription, and music production.

What can I use it for?

The all-in-one-music-structure-analyzer model can be used for a variety of music-related applications, such as:

- Music analysis and understanding: The detailed analysis of the music structure can be used to better understand the composition and arrangement of a musical piece.

- Music editing and production: The beat, downbeat, and segment information can be used to aid in tasks like tempo matching, time stretching, and sound editing.

- Automatic music transcription: The model's output can be used as a starting point for automatic music transcription systems.

- Music information retrieval: The structural information can be used to improve the performance of music search and recommendation systems.
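
As an illustration of the tempo-matching use case, the sketch below estimates a tempo from a list of beat times and computes the playback-rate factor needed to reach a target tempo. The function names `bpm_from_beats` and `stretch_ratio` are hypothetical, and the beat times are made up. The median inter-beat interval is one simple estimator chosen here, not necessarily what the model itself uses.

```python
from statistics import median


def bpm_from_beats(beats: list[float]) -> float:
    """Estimate tempo as 60 divided by the median inter-beat interval."""
    if len(beats) < 2:
        raise ValueError("need at least two beat times")
    intervals = [b - a for a, b in zip(beats, beats[1:])]
    return 60.0 / median(intervals)


def stretch_ratio(source_bpm: float, target_bpm: float) -> float:
    """Playback-rate factor that brings source_bpm to target_bpm.

    A value above 1 means the track must be played faster.
    """
    if source_bpm <= 0 or target_bpm <= 0:
        raise ValueError("tempos must be positive")
    return target_bpm / source_bpm


beats = [0.0, 0.5, 1.0, 1.5, 2.0]  # made-up beat times in seconds
source = bpm_from_beats(beats)
print(source, stretch_ratio(source, 128.0))
```

The median is used instead of the mean so that a single missed or spurious beat has little effect on the estimate.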

Things to try

One interesting thing to try with the all-in-one-music-structure-analyzer model is to use the segment boundary and label information to create visualizations of the music structure. This can provide a quick and intuitive way to understand the overall composition of a musical piece.
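
A visualization does not need a plotting library to be useful. The sketch below renders segments as a one-line text timeline, assuming each segment is available as a `(start, end, label)` tuple. That tuple layout and the function name `render_timeline` are assumptions for illustration, and the segment data is made up.

```python
def render_timeline(
    segments: list[tuple[float, float, str]], width: int = 40
) -> str:
    """Render (start, end, label) segments as a one-line text timeline.

    Each cell shows the first letter of the label active at the cell's
    centre time, so longer segments occupy proportionally more cells.
    """
    total = max(end for _, end, _ in segments)
    cells = []
    for i in range(width):
        t = (i + 0.5) * total / width  # time at the centre of this cell
        symbol = "."  # shown when no segment covers this time
        for start, end, label in segments:
            if start <= t < end:
                symbol = label[0].upper()
                break
        cells.append(symbol)
    return "".join(cells)


segments = [
    (0.0, 10.0, "intro"),
    (10.0, 30.0, "verse"),
    (30.0, 40.0, "chorus"),
]
print(render_timeline(segments, width=8))  # IIVVVVCC
```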

Another interesting experiment would be to use the model's output as a starting point for further music analysis or processing tasks, such as chord detection, melody extraction, or automatic music summarization.
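
As one simple example of building on the segment output, the sketch below picks a preview excerpt for a track by choosing the longest segment with a preferred label. This is a naive heuristic offered for illustration, not an established summarization method. The `(start, end, label)` tuple layout and the name `pick_preview` are assumptions.

```python
def pick_preview(
    segments: list[tuple[float, float, str]], preferred: str = "chorus"
) -> tuple[float, float]:
    """Return (start, end) of the longest segment with the preferred label.

    Falls back to the longest segment overall when no segment carries
    the preferred label.
    """
    if not segments:
        raise ValueError("no segments given")
    candidates = [s for s in segments if s[2] == preferred] or segments
    start, end, _ = max(candidates, key=lambda s: s[1] - s[0])
    return start, end


# Made-up segment data, not real model output.
song = [
    (0.0, 10.0, "intro"),
    (10.0, 30.0, "verse"),
    (30.0, 45.0, "chorus"),
    (45.0, 65.0, "verse"),
    (65.0, 85.0, "chorus"),
]
print(pick_preview(song))
```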

This summary was produced with help from an AI and may contain inaccuracies - check out the links to read the original source documents!

Related Models

all-in-one-audio


The all-in-one-audio model is an AI-powered music analysis and stem separation tool created by erickluis00. It combines the capabilities of the Demucs and MDX-Net models to provide a comprehensive audio processing solution. The model can analyze music structure, separate audio into individual stems (such as vocals, drums, and bass), and generate sonifications and visualizations of the audio data. It is similar to other audio separation models like demucs and spleeter, but offers a more all-in-one approach.

Model inputs and outputs

The all-in-one-audio model takes a music input file and several optional parameters to control the analysis and separation process. The outputs include the separated audio stems, as well as sonifications and visualizations of the audio data.

Inputs

- music_input: An audio file to be analyzed and processed.
- sonify: A boolean flag to save sonifications of the analysis results.
- visualize: A boolean flag to save visualizations of the analysis results.
- audioSeparator: A boolean flag to enable audio separation using the MDX-Net model.
- include_embeddings: A boolean flag to include audio embeddings in the analysis results.
- include_activations: A boolean flag to include activations in the analysis results.
- audioSeparatorModel: The name of the pre-trained model to use for audio separation.

Outputs

- mdx_other: An array of URIs for the separated "other" stems (such as instruments) using the MDX-Net model.
- mdx_vocals: A URI for the separated vocal stem using the MDX-Net model.
- mdx_instrumental: A URI for the separated instrumental stem using the MDX-Net model.
- demucs_bass: A URI for the separated bass stem using the Demucs model.
- demucs_drums: A URI for the separated drum stem using the Demucs model.
- demucs_guitar: A URI for the separated guitar stem using the Demucs model.
- demucs_piano: A URI for the separated piano stem using the Demucs model.
- demucs_vocals: A URI for the separated vocal stem using the Demucs model.
- demucs_other: A URI for the separated "other" stem using the Demucs model.
- sonification: A URI for the generated sonification of the analysis results.
- visualization: A URI for the generated visualization of the analysis results.
- analyzer_result: A URI for the overall analysis results.

Capabilities

The all-in-one-audio model can analyze the structure of music and separate the audio into individual stems, such as vocals, drums, and instruments. It uses the Demucs and MDX-Net models to achieve this, combining their strengths to provide a comprehensive audio processing solution.

What can I use it for?

The all-in-one-audio model can be used for a variety of music-related applications, such as audio editing, music production, and music analysis. It can be particularly useful for producers, musicians, and researchers who need to work with individual audio stems or analyze the structure of music. For example, you could use the model to separate the vocals from a song, create remixes or mashups, or study the relationships between different musical elements.

Things to try

Some interesting things to try with the all-in-one-audio model include:

- Experimenting with the different audio separation models (Demucs and MDX-Net) to see which one works best for your specific use case.
- Generating sonifications and visualizations of the audio data to gain new insights into the music.
- Combining the separated audio stems in creative ways to produce new musical compositions.
- Analyzing the structure of music to better understand the relationships between different musical elements.


musicgen-remixer


musicgen-remixer is a Cog implementation of the MusicGen Chord model, a modified version of Meta's MusicGen Melody model. It can generate music by remixing an input audio file into a different style based on a text prompt. This model is created by sakemin, who has also developed similar models like musicgen-fine-tuner and musicgen.

Model inputs and outputs

The musicgen-remixer model takes in an audio file and a text prompt describing the desired musical style. It then generates a remix of the input audio in the specified style. The model supports various configuration options, such as adjusting the sampling temperature, controlling the influence of the input, and selecting the output format.

Inputs

- prompt: A text description of the desired musical style for the remix.
- music_input: An audio file to be remixed.

Outputs

- The remixed audio file in the requested style.

Capabilities

The musicgen-remixer model can transform input audio into a variety of musical styles based on a text prompt. For example, you could input a rock song and a prompt like "bossa nova" to generate a bossa nova-style remix of the original track.

What can I use it for?

The musicgen-remixer model could be useful for musicians, producers, or creators who want to experiment with remixing and transforming existing audio content. It could be used to create new, unique musical compositions, add variety to playlists, or generate backing tracks for live performances.

Things to try

Try inputting different types of audio, from vocals to full-band recordings, and see how the model handles the transformation. Experiment with various prompts, from specific genres to more abstract descriptors, to see the range of styles the model can produce.


musicgen-stereo-chord


musicgen-stereo-chord is a Cog implementation of Meta's MusicGen Melody model, created by sakemin. It can generate music based on audio-based chord conditions or text-based chord conditions, with the key difference being that it is restricted to chord sequences and tempo. This contrasts with the original MusicGen model, which can generate music from a prompt or melody.

Model inputs and outputs

The musicgen-stereo-chord model takes a variety of inputs to condition the generated music, including a text-based prompt, chord progression, tempo, and time signature. It outputs a generated audio file in either WAV or MP3 format.

Inputs

- Prompt: A description of the music you want to generate.
- Text Chords: A text-based chord progression condition, with each chord specified by a root note and optional chord type.
- BPM: The tempo of the generated music, in beats per minute.
- Time Signature: The time signature of the generated music, in the format "numerator/denominator".
- Audio Chords: An optional audio file that will be used to condition the chord progression.
- Audio Start/End: The start and end times within the audio file to use for chord conditioning.
- Duration: The length of the generated audio, in seconds.
- Continuation: Whether to continue the music from the provided audio file, or to generate new music based on the chord conditions.
- Multi-Band Diffusion: Whether to use the Multi-Band Diffusion technique to decode the generated audio.
- Normalization Strategy: The strategy to use for normalizing the output audio.
- Sampling Parameters: Various parameters to control the sampling process, such as temperature, top-k, and top-p.

Outputs

- Generated Audio: The generated music in WAV or MP3 format.

Capabilities

musicgen-stereo-chord can generate coherent and musically plausible chord-based music, with the ability to condition on both text-based and audio-based chord progressions. It also supports features like continuation, where the generated music can build upon a provided audio file, and multi-band diffusion, which can improve the quality of the output audio.

What can I use it for?

The musicgen-stereo-chord model could be used for a variety of music-related applications, such as:

- Generating background music for videos, games, or other multimedia projects.
- Composing chord-based musical pieces for various genres, such as pop, rock, or electronic music.
- Experimenting with different chord progressions and tempos to inspire new musical ideas.
- Exploring the use of audio-based chord conditioning to create more authentic-sounding music.

Things to try

One interesting aspect of musicgen-stereo-chord is its ability to continue generating music from a provided audio file. This could be used to create seamless loops or extended musical compositions by iteratively generating new sections that flow naturally from the previous ones.

Another intriguing feature is the multi-band diffusion technique, which can improve the overall quality of the generated audio. Experimenting with this setting and comparing the results to the standard decoding approach could yield interesting insights into the trade-offs between audio quality and generation time.


musicgen-chord


musicgen-chord is a modified version of Meta's MusicGen Melody model, created by sakemin. This model can generate music based on either audio-based chord conditions or text-based chord conditions. It is a specialized model that focuses on generating music restricted to specific chord sequences and tempos. The model is similar to other models in the MusicGen family, such as musicgen-stereo-chord, which generates music in stereo with chord and tempo restrictions, and musicgen-remixer, which can remix music into different styles using MusicGen Chord. Additionally, the musicgen-fine-tuner model allows users to fine-tune the MusicGen small, medium, and melody models, including the stereo versions.

Model inputs and outputs

musicgen-chord takes in a variety of inputs to control the generated music, including text-based chord conditions, audio-based chord conditions, tempo, time signature, and more. The model can output audio in either WAV or MP3 format.

Inputs

- Prompt: A description of the music you want to generate.
- Text Chords: A text-based chord progression condition, where chords are specified using a specific format.
- BPM: The desired tempo for the generated music.
- Time Signature: The time signature for the generated music.
- Audio Chords: An audio file that will condition the chord progression.
- Audio Start/End: The start and end times in the audio file to use for chord conditioning.
- Duration: The duration of the generated audio in seconds.
- Continuation: Whether the generated music should continue from the provided audio chords.
- Multi-Band Diffusion: Whether to use Multi-Band Diffusion for decoding the EnCodec tokens.
- Normalization Strategy: The strategy for normalizing the output audio.
- Sampling Parameters: Controls like top-k, top-p, temperature, and classifier-free guidance.

Outputs

- Generated Audio: The output audio file in either WAV or MP3 format.

Capabilities

musicgen-chord can generate music with specific chord progressions and tempos. This allows users to create music that fits within certain musical constraints, such as a particular genre or style. The model can also continue generating music based on an existing audio input, allowing for more seamless and coherent compositions.

What can I use it for?

The musicgen-chord model can be useful for a variety of music-related applications, such as:

- Music Composition: Generate new musical compositions with specific chord progressions and tempos, suitable for various genres or styles.
- Film/Game Scoring: Create background music for films, TV shows, or video games that fits the desired mood and musical characteristics.
- Music Remixing: Rework existing music by generating new variations based on the original chord progressions and tempo.
- Music Education: Use the model to create practice exercises or educational materials focused on chord progressions and music theory.

Things to try

Some interesting things to try with musicgen-chord include:

- Experiment with different text-based chord conditions to see how they impact the generated music.
- Explore using audio-based chord conditioning and compare the results to text-based conditioning.
- Try generating longer, more complex musical compositions by using the continuation feature.
- Adjust the various sampling parameters, such as temperature and classifier-free guidance, to see how they affect the creativity and diversity of the generated music.
