hairclip

Maintainer: wty-ustc

275

| Property | Value |

|---|---|

| Run this model | Run on Replicate |

| API spec | View on Replicate |

| Github link | View on Github |

| Paper link | View on Arxiv |

Create account to get full access

Model overview

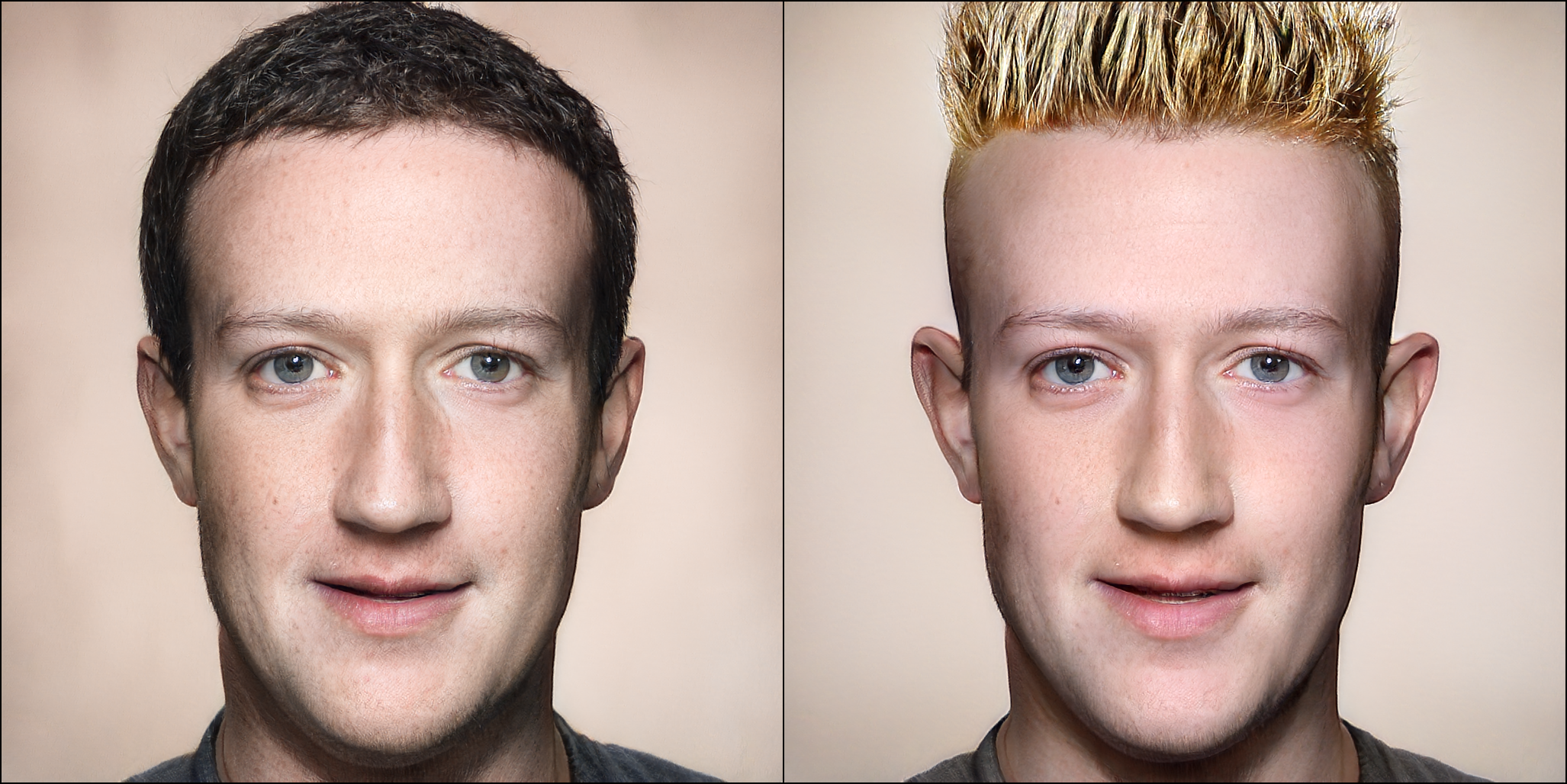

The hairclip model, developed by maintainer wty-ustc, is a novel AI model that can design hair by utilizing both text and reference image inputs. It supports editing hairstyle, hair color, or both, making it a versatile tool for hair styling and customization. The model builds upon previous work like StyleCLIP and HairCLIPv2, which have demonstrated the power of combining CLIP and StyleGAN for text-driven image manipulation.

Model inputs and outputs

The hairclip model takes two main inputs: an image and a text description. The image can be of any face, which the model will use as a reference for editing the hairstyle and/or color. The text description can specify the desired hairstyle, hair color, or both.

Inputs

- Image: The input image, which can be of any face. The model will use this as a reference for editing the hairstyle and/or color.

- Editing Type: Specify whether to edit the hairstyle, hair color, or both.

- Hairstyle Description: A text prompt describing the desired hairstyle.

- Color Description: A text prompt describing the desired hair color.

Outputs

- Edited Image: The output image with the hair edited according to the provided inputs.

Capabilities

The hairclip model is capable of seamlessly blending text-based and image-based hair editing. It can manipulate hairstyles, hair colors, or both, allowing users to customize a person's appearance in a natural and realistic way. The model leverages powerful underlying technologies like CLIP and StyleGAN to achieve high-quality and photorealistic results.

What can I use it for?

The hairclip model can be used for a variety of creative and practical applications. For example, you could use it to experiment with different hairstyles and colors on yourself or your friends, or to create unique and personalized avatars and characters for games, art projects, or social media. Businesses in the beauty and fashion industries could also leverage the model to offer virtual hair styling services or to generate product visualization imagery.

Things to try

One interesting thing to try with the hairclip model is to experiment with different combinations of text prompts and reference images. You could start with a simple hairstyle description and see how the model interprets it, then try adding a reference image to see how the two inputs are combined. You could also try more complex or creative text prompts to see how the model responds. Additionally, you could try editing both the hairstyle and color to see the full range of the model's capabilities.

This summary was produced with help from an AI and may contain inaccuracies - check out the links to read the original source documents!

Related Models

clipstyler

25

clipstyler is an AI model developed by Gihyun Kwon and Jong Chul Ye that enables image style transfer with a single text condition. It is similar to models like stable-diffusion, styleclip, and style-clip-draw that leverage text-to-image generation capabilities. However, clipstyler is unique in its ability to transfer the style of an image based on a single text prompt, rather than relying on a reference image. Model inputs and outputs The clipstyler model takes two inputs: an image and a text prompt. The image is used as the content that will have its style transferred, while the text prompt specifies the desired style. The model then outputs the stylized image, where the content of the input image has been transformed to match the requested style. Inputs Image**: The input image that will have its style transferred Text**: A text prompt describing the desired style to be applied to the input image Outputs Image**: The output image with the input content stylized according to the provided text prompt Capabilities clipstyler is capable of transferring the style of an image based on a single text prompt, without requiring a reference image. This allows for more flexibility and creativity in the style transfer process, as users can experiment with a wide range of styles by simply modifying the text prompt. The model leverages the CLIP text-image encoder to learn the relationship between textual style descriptions and visual styles, enabling it to produce high-quality stylized images. What can I use it for? The clipstyler model can be used for a variety of creative applications, such as: Artistic image generation**: Quickly generate stylized versions of your own images or photos, experimenting with different artistic styles and techniques. Concept visualization**: Bring your ideas to life by generating images that match a specific textual description, useful for designers, artists, and product developers. Content creation**: Enhance your digital content, such as blog posts, social media graphics, or marketing materials, by applying unique and custom styles to your images. Things to try One interesting aspect of clipstyler is its ability to produce diverse and unexpected results by experimenting with different text prompts. Try prompts that combine multiple styles or emotions, or explore abstract concepts like "surreal" or "futuristic" to see how the model interprets and translates these ideas into visual form. The variety of outcomes can spark new creative ideas and inspire you to push the boundaries of what's possible with text-driven style transfer.

Updated Invalid Date

style-your-hair

9

The style-your-hair model, developed by the Replicate creator cjwbw, is a pose-invariant hairstyle transfer model that allows users to seamlessly transfer hairstyles between different facial poses. Unlike previous approaches that assumed aligned target and source images, this model utilizes a latent optimization technique and a local-style-matching loss to preserve the detailed textures of the target hairstyle even under significant pose differences. The model builds upon recent advances in hair modeling and leverages the capabilities of Stable Diffusion, a powerful text-to-image generation model, to produce high-quality hairstyle transfers. Similar models created by cjwbw include herge-style, anything-v4.0, and stable-diffusion-v2-inpainting. Model inputs and outputs The style-your-hair model takes two images as input: a source image containing a face and a target image containing the desired hairstyle. The model then seamlessly transfers the target hairstyle onto the source face, preserving the detailed texture and appearance of the target hairstyle even under significant pose differences. Inputs Source Image**: The image containing the face onto which the hairstyle will be transferred. Target Image**: The image containing the desired hairstyle to be transferred. Outputs Transferred Hairstyle Image**: The output image with the target hairstyle applied to the source face. Capabilities The style-your-hair model excels at transferring hairstyles between images with significant pose differences, a task that has historically been challenging. By leveraging a latent optimization technique and a local-style-matching loss, the model is able to preserve the detailed textures and appearance of the target hairstyle, resulting in high-quality, natural-looking transfers. What can I use it for? The style-your-hair model can be used in a variety of applications, such as virtual hair styling, entertainment, and fashion. For example, users could experiment with different hairstyles on their own photos or create unique hairstyles for virtual avatars. Businesses in the beauty and fashion industries could also leverage the model to offer personalized hair styling services or incorporate hairstyle transfer features into their products. Things to try One interesting aspect of the style-your-hair model is its ability to preserve the local-style details of the target hairstyle, even under significant pose differences. Users could experiment with transferring hairstyles between images with varying facial poses and angles, and observe how the model maintains the intricate textures and structure of the target hairstyle. Additionally, users could try combining the style-your-hair model with other Replicate models, such as anything-v3.0 or portraitplus, to explore more creative and personalized hair styling possibilities.

Updated Invalid Date

t2i_cl

1

t2i_cl is a text-to-image synthesis model that uses contrastive learning to improve the quality and diversity of generated images. It is based on the AttnGAN and DM-GAN models, but with the addition of a contrastive learning component. This allows the model to better capture the semantics and visual features of the input text, resulting in more faithful and visually appealing image generation. The model was developed by huiyegit, a researcher focused on text-to-image synthesis. It is similar to other state-of-the-art text-to-image models like stable-diffusion, t2i-adapter, and tedigan, which also aim to generate high-quality images from textual descriptions. Model inputs and outputs t2i_cl takes a textual description as input and generates a corresponding image. The model is trained on datasets of text-image pairs, which allows it to learn the association between language and visual concepts. Inputs sentence**: a text description of the image to be generated Outputs file**: a URI pointing to the generated image text**: the input text description Capabilities The t2i_cl model is capable of generating photorealistic images from a wide range of textual descriptions, including descriptions of objects, scenes, and even abstract concepts. The contrastive learning component helps the model better understand the semantics of the input text, leading to more faithful and visually appealing image generation. What can I use it for? The t2i_cl model could be useful for a variety of applications, such as: Content creation**: Generating images to accompany text-based content, like blog posts, articles, or social media posts. Prototyping and visualization**: Quickly generating visual concepts based on textual descriptions for design, engineering, or other creative projects. Accessibility**: Generating images to help convey information to users who may have difficulty reading or processing text. Things to try With t2i_cl, you can experiment with generating images for a wide range of textual descriptions, from simple objects to complex scenes and abstract ideas. Try providing the model with detailed, evocative language and see how it responds. You can also explore the model's ability to generate diverse images for the same input text by running the generation process multiple times.

Updated Invalid Date

styleclip

1.3K

styleclip is a text-driven image manipulation model developed by Or Patashnik, Zongze Wu, Eli Shechtman, Daniel Cohen-Or, and Dani Lischinski, as described in their ICCV 2021 paper. The model leverages the generative power of the StyleGAN generator and the visual-language capabilities of CLIP to enable intuitive text-based manipulation of images. The styleclip model offers three main approaches for text-driven image manipulation: Latent Vector Optimization: This method uses a CLIP-based loss to directly modify the input latent vector in response to a user-provided text prompt. Latent Mapper: This model is trained to infer a text-guided latent manipulation step for a given input image, enabling faster and more stable text-based editing. Global Directions: This technique maps text prompts to input-agnostic directions in the StyleGAN's style space, allowing for interactive text-driven image manipulation. Similar models like clip-features, stylemc, stable-diffusion, gfpgan, and upscaler also explore text-guided image generation and manipulation, but styleclip is unique in its use of CLIP and StyleGAN to enable intuitive, high-quality edits. Model inputs and outputs Inputs Input**: An input image to be manipulated Target**: A text description of the desired output image Neutral**: A text description of the input image Manipulation Strength**: A value controlling the degree of manipulation towards the target description Disentanglement Threshold**: A value controlling how specific the changes are to the target attribute Outputs Output**: The manipulated image generated based on the input and text prompts Capabilities The styleclip model is capable of generating highly realistic image edits based on natural language descriptions. For example, it can take an image of a person and modify their hairstyle, gender, expression, or other attributes by simply providing a target text prompt like "a face with a bowlcut" or "a smiling face". The model is able to make these changes while preserving the overall fidelity and identity of the original image. What can I use it for? The styleclip model can be used for a variety of creative and practical applications. Content creators and designers could leverage the model to quickly generate variations of existing images or produce new images based on text descriptions. Businesses could use it to create custom product visuals or personalized content. Researchers may find it useful for studying text-to-image generation and latent space manipulation. Things to try One interesting aspect of the styleclip model is its ability to perform "disentangled" edits, where the changes are specific to the target attribute described in the text prompt. By adjusting the disentanglement threshold, you can control how localized the edits are - a higher threshold leads to more targeted changes, while a lower threshold results in broader modifications across the image. Try experimenting with different text prompts and threshold values to see the range of edits the model can produce.

Updated Invalid Date