sdxl_overwatch

Maintainer: bemothhyde


| Property | Value |
|---|---|
| Run this model | Run on Replicate |
| API spec | View on Replicate |
| GitHub link | No GitHub link provided |
| Paper link | No paper link provided |


Model overview

The sdxl_overwatch model is a fine-tuned version of SDXL (Stable Diffusion XL), trained on images of Overwatch heroes. It is maintained by bemothhyde and belongs to a wider group of SDXL-based fine-tunes that includes sdxl-deep-down, sdxl-betterup, sdxl-black-light, and sdxl-toy-story-people.

Model inputs and outputs

The sdxl_overwatch model accepts a text prompt, an optional input image and mask, and a set of parameters that control generation. It returns one or more generated images based on those inputs.

Inputs

- Prompt: The text prompt that describes the desired image content.

- Negative Prompt: A text prompt that specifies elements to be excluded from the generated image.

- Image: An input image to be used for image-to-image or inpainting tasks.

- Mask: An input mask for the inpainting mode, where black areas will be preserved and white areas will be inpainted.

- Width and Height: The desired dimensions of the output image.

- Seed: A random seed value. Reusing the same seed with the same inputs makes the output reproducible.

- Scheduler: The scheduler algorithm to be used for image generation.

- Guidance Scale: The scale for classifier-free guidance, which controls how strongly the generated image follows the prompt.

- Num Inference Steps: The number of denoising steps to be used during image generation.

- Refine: The refiner style to apply to the generated image, for example base_image_refiner or expert_ensemble_refiner.

- Lora Scale: The LoRA (Low-Rank Adaptation) additive scale, which is only applicable to trained models.

- Num Outputs: The number of images to be generated.

- Refine Steps: The number of steps to refine the image, which is only applicable to the base_image_refiner.

- High Noise Frac: The fraction of noise to use, which is only applicable to the expert_ensemble_refiner.

- Apply Watermark: A boolean flag to enable or disable the application of a watermark to the generated images.

- Replicate Weights: The LoRA weights to be used, which can be specified instead of the default weights.
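The inputs above can be gathered into a single request payload. The following is a minimal sketch, not the model's confirmed API: the snake_case key names, the default values, and the "no_refiner" option are assumptions based on the common SDXL template on Replicate, and `build_input` and `run_model` are hypothetical helper names. Check the API spec on the model's Replicate page for the real schema.

```python
from typing import Any, Dict, Optional

# Refiner names: two appear in the input list above; "no_refiner" is an
# assumption based on the common SDXL template on Replicate.
REFINE_CHOICES = {"no_refiner", "base_image_refiner", "expert_ensemble_refiner"}


def build_input(
    prompt: str,
    negative_prompt: str = "",
    width: int = 1024,
    height: int = 1024,
    num_outputs: int = 1,
    num_inference_steps: int = 50,
    guidance_scale: float = 7.5,
    refine: str = "no_refiner",
    lora_scale: float = 0.6,
    apply_watermark: bool = True,
    seed: Optional[int] = None,
) -> Dict[str, Any]:
    """Assemble an input payload. Key names and defaults are assumed."""
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    if refine not in REFINE_CHOICES:
        raise ValueError(f"unknown refine option: {refine!r}")
    if num_outputs < 1:
        raise ValueError("num_outputs must be at least 1")

    payload: Dict[str, Any] = {
        "prompt": prompt,
        "negative_prompt": negative_prompt,
        "width": width,
        "height": height,
        "num_outputs": num_outputs,
        "num_inference_steps": num_inference_steps,
        "guidance_scale": guidance_scale,
        "refine": refine,
        "lora_scale": lora_scale,
        "apply_watermark": apply_watermark,
    }
    # Leave the seed out entirely so the service can pick a random one.
    if seed is not None:
        payload["seed"] = seed
    return payload


def run_model(model_ref: str, payload: Dict[str, Any]) -> Any:
    """Send the payload to Replicate.

    Requires the `replicate` package and a REPLICATE_API_TOKEN environment
    variable. `model_ref` must be copied from the model's Replicate page.
    """
    import replicate  # imported lazily so build_input works offline

    return replicate.run(model_ref, input=payload)
```

A call would then look like `run_model(model_ref, build_input("portrait of an Overwatch hero, studio lighting", seed=42))`, where `model_ref` is the owner/name:version string shown on Replicate. The version identifier is not given in this document, so it is left for the reader to fill in.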

Outputs

- A set of generated images based on the provided inputs.
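The returned images usually need to be written to disk. The sketch below assumes each output item is either a file-like object with a `read()` method or a URL string, which is how Replicate's Python client has returned image outputs depending on its version. The helper name `save_outputs`, the `.png` extension, and the file naming are illustrative assumptions.

```python
from pathlib import Path
from typing import Any, Iterable, List
from urllib.request import urlopen


def save_outputs(outputs: Iterable[Any], out_dir: str = "outputs",
                 stem: str = "overwatch") -> List[Path]:
    """Write each generated image to out_dir and return the saved paths."""
    target_dir = Path(out_dir)
    target_dir.mkdir(parents=True, exist_ok=True)

    saved: List[Path] = []
    for index, item in enumerate(outputs):
        if hasattr(item, "read"):
            # File-like output: read the bytes directly.
            data = item.read()
        else:
            # Otherwise treat the item as a URL and download it.
            with urlopen(str(item)) as response:
                data = response.read()
        # The .png extension is an assumption about the output format.
        path = target_dir / f"{stem}_{index}.png"
        path.write_bytes(data)
        saved.append(path)
    return saved
```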

Capabilities

The sdxl_overwatch model generates images of Overwatch heroes in the style of the Overwatch universe, drawing on its fine-tuning on hero imagery. Because it also accepts an input image and mask, it can be used for image-to-image and inpainting tasks as well as text-to-image generation.

What can I use it for?

The sdxl_overwatch model can be useful for creating Overwatch-themed content, such as fan art, illustrations, or even concept designs for new characters or game elements. It can be integrated into various creative projects or used to generate images for Overwatch-related marketing or promotional materials.

Things to try

One interesting aspect of the sdxl_overwatch model is its ability to generate unexpected interpretations of Overwatch heroes. Try varying the prompt, seed, and guidance scale and comparing the results to see how far the model can move from its training imagery while keeping the characters recognizable.
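One way to organize such experiments is a small parameter sweep. The sketch below only builds the list of input combinations; it does not call the model. The helper name `sweep_inputs` and the key names `prompt`, `guidance_scale`, and `seed` are assumptions modeled on the input list earlier in this page.

```python
from itertools import product
from typing import Any, Dict, List, Sequence


def sweep_inputs(prompts: Sequence[str],
                 guidance_scales: Sequence[float],
                 seeds: Sequence[int]) -> List[Dict[str, Any]]:
    """Return one input dict per (prompt, guidance scale, seed) combination."""
    return [
        {"prompt": prompt, "guidance_scale": scale, "seed": seed}
        for prompt, scale, seed in product(prompts, guidance_scales, seeds)
    ]


# Example grid: 2 prompts x 3 guidance scales x 2 seeds = 12 runs.
grid = sweep_inputs(
    prompts=["an Overwatch hero as a watercolor painting",
             "an Overwatch hero in a rainy neon city"],
    guidance_scales=[4.0, 7.5, 12.0],
    seeds=[1, 2],
)
```

Holding the seed fixed while changing only the guidance scale makes it easier to attribute differences between images to that one parameter.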

This summary was produced with help from an AI and may contain inaccuracies - check out the links to read the original source documents!

Related Models

sdxl-lightning-4step


sdxl-lightning-4step is a fast text-to-image model developed by ByteDance that can generate high-quality images in just 4 steps. It is similar to other fast diffusion models like AnimateDiff-Lightning and Instant-ID MultiControlNet, which also aim to speed up the image generation process. Unlike the original Stable Diffusion model, these fast models sacrifice some flexibility and control to achieve faster generation times.

Model inputs and outputs

The sdxl-lightning-4step model takes in a text prompt and various parameters to control the output image, such as the width, height, number of images, and guidance scale. The model can output up to 4 images at a time, with a recommended image size of 1024x1024 or 1280x1280 pixels.

Inputs

- Prompt: The text prompt describing the desired image
- Negative prompt: A prompt that describes what the model should not generate
- Width: The width of the output image
- Height: The height of the output image
- Num outputs: The number of images to generate (up to 4)
- Scheduler: The algorithm used to sample the latent space
- Guidance scale: The scale for classifier-free guidance, which controls the trade-off between fidelity to the prompt and sample diversity
- Num inference steps: The number of denoising steps, with 4 recommended for best results
- Seed: A random seed to control the output image

Outputs

- Image(s): One or more images generated based on the input prompt and parameters

Capabilities

The sdxl-lightning-4step model is capable of generating a wide variety of images based on text prompts, from realistic scenes to imaginative and creative compositions. The model's 4-step generation process allows it to produce high-quality results quickly, making it suitable for applications that require fast image generation.

What can I use it for?

The sdxl-lightning-4step model could be useful for applications that need to generate images in real-time, such as video game asset generation, interactive storytelling, or augmented reality experiences. Businesses could also use the model to quickly generate product visualization, marketing imagery, or custom artwork based on client prompts. Creatives may find the model helpful for ideation, concept development, or rapid prototyping.

Things to try

One interesting thing to try with the sdxl-lightning-4step model is to experiment with the guidance scale parameter. By adjusting the guidance scale, you can control the balance between fidelity to the prompt and diversity of the output. Lower guidance scales may result in more unexpected and imaginative images, while higher scales will produce outputs that are closer to the specified prompt.

Updated Invalid Date

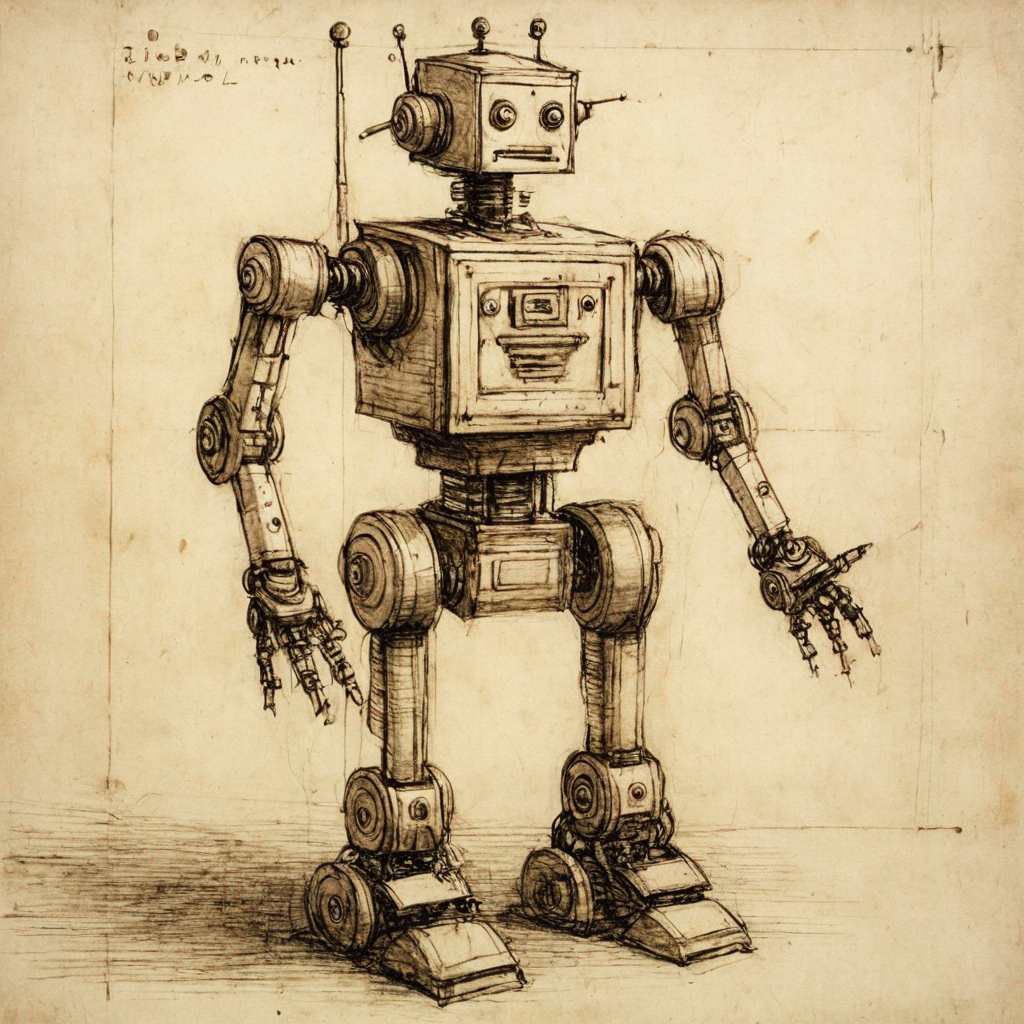

sdxl-davinci


sdxl-davinci is a fine-tuned version of the SDXL model, created by cbh123, that has been trained on Davinci drawings. This model is similar to other SDXL models like sdxl-allaprima, sdxl-shining, sdxl-money, sdxl-victorian-illustrations, and sdxl-2004, which have been fine-tuned on specific datasets to capture unique artistic styles and visual characteristics.

Model inputs and outputs

The sdxl-davinci model accepts a variety of inputs, including an image, prompt, and various parameters to control the output. The model can generate images based on the provided prompt, or perform tasks like image inpainting and refinement. The output is an array of one or more generated images.

Inputs

- Prompt: The text prompt that describes the desired image
- Image: An input image to be used for tasks like img2img or inpainting
- Mask: An input mask for the inpaint mode, where black areas will be preserved and white areas will be inpainted
- Width/Height: The desired dimensions of the output image
- Seed: A random seed value to control the image generation
- Refine: The type of refinement to apply to the generated image
- Scheduler: The scheduler algorithm to use for image generation
- LoRA Scale: The scale to apply to any LoRA components
- Num Outputs: The number of images to generate
- Refine Steps: The number of refinement steps to apply
- Guidance Scale: The scale for classifier-free guidance
- Apply Watermark: Whether to apply a watermark to the generated image
- High Noise Frac: The fraction of high noise to use for the expert_ensemble_refiner
- Negative Prompt: An optional negative prompt to guide the image generation

Outputs

- An array of one or more generated images

Capabilities

sdxl-davinci can generate a variety of artistic and illustrative images based on the provided prompt. The model's fine-tuning on Davinci drawings allows it to capture a unique and expressive style in the generated outputs. The model can also perform image inpainting and refinement tasks, allowing users to modify or enhance existing images.

What can I use it for?

The sdxl-davinci model can be used for a range of creative and artistic applications, such as generating illustrations, concept art, and digital paintings. Its ability to work with input images and masks makes it suitable for tasks like image editing, restoration, and enhancement. Additionally, the model's varied capabilities allow for experimentation and exploration of different artistic styles and compositions.

Things to try

One interesting aspect of the sdxl-davinci model is its ability to capture the expressive and dynamic qualities of Davinci's drawing style. Users can experiment with different prompts and input parameters to see how the model interprets and translates these artistic elements into unique and visually striking outputs. Additionally, the model's inpainting and refinement capabilities can be used to transform or enhance existing images, opening up opportunities for creative image manipulation and editing.


sdxl-bladerunner2049


sdxl-bladerunner2049 is a specialized SDXL model trained on Blade Runner 2049 still frames. It is maintained by doriandarko. This model is similar to other SDXL models like sdxl-deep-down, sdxl, sdxl-black-light, and sdxl_overwatch, which are fine-tuned on various datasets to specialize in different visual styles and themes.

Model inputs and outputs

The sdxl-bladerunner2049 model takes in a variety of inputs including an image, mask, prompt, and various configuration options. The output is an array of generated images.

Inputs

- Prompt: The input text prompt to guide the image generation
- Image: An input image for img2img or inpaint mode
- Mask: An input mask for inpaint mode, where black areas will be preserved and white areas will be inpainted
- Seed: A random seed value, leave blank to randomize
- Width/Height: The desired width and height of the output image
- Num Outputs: The number of images to generate
- Guidance Scale: The scale for classifier-free guidance
- Num Inference Steps: The number of denoising steps to perform
- Prompt Strength: The strength of the prompt when using img2img/inpaint
- Refine: The refine style to use
- Scheduler: The scheduler algorithm to use
- LoRA Scale: The LoRA additive scale (only applicable on trained models)
- High Noise Frac: The fraction of noise to use for the expert_ensemble_refiner
- Apply Watermark: Whether to apply a watermark to the generated images
- Replicate Weights: The LoRA weights to use (leave blank for default)

Outputs

- Array of generated images: The model outputs an array of generated image URLs

Capabilities

The sdxl-bladerunner2049 model is capable of generating high-quality images in the style of Blade Runner 2049. It can produce a variety of futuristic, dystopian scenes with distinct visual elements from the film.

What can I use it for?

You can use the sdxl-bladerunner2049 model to create Blade Runner-inspired artwork, concept art, or visual assets for projects related to science fiction, cyberpunk, or futuristic themes. The model's specialized training allows it to capture the unique aesthetic of the Blade Runner universe, making it a valuable tool for artists, designers, and filmmakers working in these genres.

Things to try

Some interesting things to try with the sdxl-bladerunner2049 model include experimenting with different input prompts to explore the range of visual styles it can produce, using the img2img and inpaint modes to modify existing Blade Runner imagery, and adjusting the various configuration options to fine-tune the output to your specific needs.


sdxl-allaprima


The sdxl-allaprima model, created by Dorian Darko, is a Stable Diffusion XL (SDXL) model trained on a blocky oil painting and still life dataset. This model shares similarities with other SDXL models like sdxl-inpainting, sdxl-bladerunner2049, and sdxl-deep-down, which have been fine-tuned on specific datasets to enhance their capabilities in areas like inpainting, sci-fi imagery, and underwater scenes.

Model inputs and outputs

The sdxl-allaprima model accepts a variety of inputs, including an input image, a prompt, and optional parameters like seed, width, height, and guidance scale. The output is an array of generated images that match the input prompt and image.

Inputs

- Prompt: The text prompt that describes the desired image.
- Image: An input image that the model can use as a starting point for generation or inpainting.
- Mask: A mask that specifies which areas of the input image should be preserved or inpainted.
- Seed: A random seed value that can be used to generate reproducible outputs.
- Width/Height: The desired dimensions of the output image.
- Guidance Scale: A parameter that controls the influence of the text prompt on the generated image.

Outputs

- Generated Images: An array of one or more images that match the input prompt and image.

Capabilities

The sdxl-allaprima model is capable of generating high-quality, artistic images based on a text prompt. It can also be used for inpainting, where the model fills in missing or damaged areas of an input image. The model's training on a dataset of blocky oil paintings and still lifes gives it the ability to generate visually striking and unique images in this style.

What can I use it for?

The sdxl-allaprima model could be useful for a variety of applications, such as:

- Creating unique digital artwork and illustrations for personal or commercial use
- Generating concept art and visual references for creative projects
- Enhancing or repairing damaged or incomplete images through inpainting
- Experimenting with different artistic styles and techniques in a generative AI framework

Things to try

One interesting aspect of the sdxl-allaprima model is its ability to generate images with a distinctive blocky, oil painting-inspired style. Users could experiment with prompts that play to this strength, such as prompts that describe abstract, surreal, or impressionistic scenes. Additionally, the model's inpainting capabilities could be explored by providing it with partially complete images and seeing how it fills in the missing details.
