internlm-xcomposer

Maintainer: cjwbw



| Property | Value |
|---|---|
| Run this model | Run on Replicate |
| API spec | View on Replicate |
| GitHub link | View on GitHub |
| Paper link | View on arXiv |


Model overview

internlm-xcomposer is an advanced text-image comprehension and composition model built on the InternLM language model. On Replicate it is maintained by cjwbw, who also maintains similar models such as cogvlm, animagine-xl-3.1, videocrafter, and scalecrafter. The model can generate coherent, context-aware articles that interleave text with images, giving readers a more engaging and immersive experience.

Model inputs and outputs

internlm-xcomposer is a powerful vision-language large model that can comprehend and compose text and images. It takes text and images as inputs, and can generate detailed text responses that describe the image content.

Inputs

- Text: Input text prompts or instructions

- Image: Input images to be described or combined with the text

Outputs

- Text: Detailed textual descriptions, captions, or compositions that integrate the input text and image
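The inputs and outputs above map onto a single API call. The sketch below assumes the official `replicate` Python client; the field names `text` and `image` and the `<version>` placeholder are hypothetical, so confirm the real names and version string in the model's API spec before running it.

```python
from pathlib import Path

# Placeholder (hypothetical): copy the exact "owner/model:version" string from the API spec.
MODEL_REF = "cjwbw/internlm-xcomposer:<version>"


def build_input(text: str, image_path: str) -> dict:
    """Assemble a payload with one text prompt and one image.

    The keys "text" and "image" are assumed field names, not confirmed ones.
    """
    if not text.strip():
        raise ValueError("text prompt must not be empty")
    return {"text": text, "image": Path(image_path)}


def describe_image(text: str, image_path: str) -> str:
    # Imported lazily so build_input() works without the client installed.
    import replicate  # pip install replicate; reads REPLICATE_API_TOKEN from the env

    payload = build_input(text, image_path)
    with open(payload["image"], "rb") as image_file:
        payload["image"] = image_file  # the client uploads open file handles
        output = replicate.run(MODEL_REF, input=payload)
    # Text models may return a string or an iterator of string chunks.
    return output if isinstance(output, str) else "".join(output)
```

For example, `describe_image("Describe this image in detail.", "photo.jpg")` would send one prompt and one local image and return the model's text response.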

Capabilities

internlm-xcomposer has several appealing capabilities, including:

- Interleaved Text-Image Composition: The model can seamlessly generate long-form text that incorporates relevant images, providing a more engaging and immersive reading experience.

- Comprehension with Rich Multilingual Knowledge: The model is trained on extensive multimodal, multilingual data, giving it a broad understanding of visual content across languages.

- Strong Performance: According to its authors, internlm-xcomposer consistently achieves state-of-the-art results among vision-language large models on benchmarks including MME Benchmark, MMBench, Seed-Bench, MMBench-CN, and CCBench.

What can I use it for?

internlm-xcomposer can be used for a variety of applications that require the integration of text and image content, such as:

- Generating illustrated articles or reports that blend text and visuals

- Enhancing educational materials with relevant images and explanations

- Improving product descriptions and marketing content with visuals

- Automating the creation of captions and annotations for images and videos

Things to try

With internlm-xcomposer, you can experiment with various tasks that combine text and image understanding, such as:

- Asking the model to describe the contents of an image in detail

- Providing a text prompt together with an image and asking the model to compose a passage that draws on both

- Giving the model a text-based scenario and an accompanying image, and having it write a story that weaves in what the image shows

- Exploring the model's multilingual capabilities by trying prompts in different languages

The versatility of internlm-xcomposer allows for creative and engaging applications that leverage the synergy between text and visuals.

This summary was produced with help from an AI and may contain inaccuracies - check out the links to read the original source documents!

Related Models

fastcomposer


The fastcomposer model, maintained on Replicate by cjwbw, enables efficient, personalized, high-quality multi-subject text-to-image generation without subject-specific fine-tuning. It builds on advances in diffusion models, augmenting the text conditioning with subject embeddings extracted from reference images. Multi-subject methods often suffer from identity blending, where the features of different subjects mix together; fastcomposer addresses this with cross-attention localization supervision, which forces the attention for each reference subject onto the correct region of the target image. The result is generation up to 2500x faster than fine-tuning-based methods, with both identity preservation and editability maintained. It can be compared with related models such as scalecrafter, internlm-xcomposer, stable-diffusion, and supir.

Model inputs and outputs

The fastcomposer model takes a text prompt, one or two reference images, and several hyperparameters that control the output. The prompt specifies the desired content, style, and composition of the generated image, while the reference images supply subject-specific information to guide generation.

Inputs

- Image1: The first reference image, used for one of the subjects in the generated image.

- Image2 (optional): A second reference image, used for another subject in the generated image.

- Prompt: The text describing the desired content, style, and composition of the generated image. It should include special tokens marking which parts of the prompt are augmented with subject information from the reference images.

- Alpha: A value between 0 and 1 that balances prompt consistency against identity preservation. A smaller alpha aligns the image more closely with the text prompt, while a larger alpha improves identity preservation.

- Num Steps: The number of diffusion steps to run during generation.

- Guidance Scale: The classifier-free guidance scale, which makes the output more consistent with the text prompt.

- Num Images Per Prompt: The number of images to generate per prompt.

- Seed: An optional random seed for reproducibility.

Outputs

- Output: An array of generated image URLs, one for each image requested via Num Images Per Prompt.

Capabilities

The fastcomposer model excels at generating personalized, multi-subject images from text prompts and reference images. It can combine different subjects, styles, actions, and contexts in the generated images without subject-specific fine-tuning. This flexibility and efficiency make fastcomposer useful for applications ranging from content creation and personalization to virtual photography and interactive storytelling.

What can I use it for?

The fastcomposer model can be used in a wide range of applications that need personalized, multi-subject images. Some potential use cases include:

- Content creation: Generate custom images for social media, blogs, and other online content to increase engagement and personalization.

- Virtual photography: Create personalized, high-quality images for virtual events, gaming, and metaverse applications.

- Interactive storytelling: Build interactive narratives whose visuals adapt to the user's preferences and prompts.

- Product visualization: Generate images of products with different models, backgrounds, and styles for e-commerce and marketing.

- Educational resources: Create personalized learning materials, such as illustrations and diagrams, to enhance the learning experience.

Things to try

One key feature of fastcomposer is that it maintains both identity preservation and editability in subject-driven image generation. By applying delayed subject conditioning during denoising, the model produces images with distinct subject features while keeping them editable through the text prompt.

Another aspect to explore is the model's cross-attention localization supervision, which addresses the identity blending problem in multi-subject generation. By constraining the attention of each reference subject to the correct region of the target image, fastcomposer produces high-quality multi-subject images without compromising individual identities.

Finally, fastcomposer's efficiency is a significant advantage: it can generate personalized images up to 2500x faster than fine-tuning-based methods, which opens up real-time and interactive applications that need rapid image generation.


cogvlm


CogVLM is a powerful open-source visual language model, maintained on Replicate by cjwbw. It comprises a vision transformer (ViT) encoder, an MLP adapter, a pretrained GPT-style large language model, and a visual expert module. CogVLM-17B has 10 billion vision parameters and 7 billion language parameters, and it achieves state-of-the-art performance on 10 classic cross-modal benchmarks, including NoCaps, Flickr30k captioning, and RefCOCO. It can also hold conversations about images. Related models include segmind-vega, an open-source distilled Stable Diffusion model with a 100% speedup; animagine-xl-3.1, an anime-themed text-to-image Stable Diffusion model; cog-a1111-ui, a collection of anime Stable Diffusion models; and videocrafter, a text-to-video and image-to-video generation and editing model.

Model inputs and outputs

CogVLM accepts both text and image inputs. It can generate detailed image descriptions, answer various types of visual questions, and engage in multi-turn conversations about images.

Inputs

- Image: The input image that CogVLM processes.

- Query: The text prompt or question that CogVLM answers about the input image.

Outputs

- Text response: The text CogVLM generates from the input image and query.

Capabilities

CogVLM describes images accurately and in detail, with very few hallucinations. It can answer various types of visual questions, and a visual grounding version can tie the generated text to specific regions of the input image. Its authors report that it sometimes captures more detailed content than GPT-4V(ision).

What can I use it for?

With its visual and language understanding capabilities, CogVLM suits applications such as image captioning, visual question answering, and image-based dialogue systems. Developers and researchers can build on it to create multimodal AI systems that process and understand both visual and textual information.

Things to try

One interesting aspect of CogVLM is its ability to hold multi-turn conversations about images. Try asking a series of related questions about a single image and observe how the model responds and keeps track of context. You can also experiment with different prompting strategies to see how CogVLM performs on visual understanding tasks such as detailed image description, visual reasoning, and visual grounding.
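Because the listed inputs are a single Image and a single Query, one way to experiment with multi-turn questioning is to fold earlier turns into each new query on the client side. This is a hypothetical sketch, not CogVLM's native chat format: the `Q:`/`A:` layout, the lowercase field names `image` and `query`, and the `<version>` placeholder are all assumptions.

```python
# Placeholder (hypothetical): copy the exact "owner/model:version" string from the API spec.
MODEL_REF = "cjwbw/cogvlm:<version>"


def build_query(history: list, question: str) -> str:
    """Fold earlier (question, answer) turns into one query string.

    The "Q:/A:" layout is an assumed prompt format, not CogVLM's template.
    """
    turns = [f"Q: {q}\nA: {a}" for q, a in history]
    turns.append(f"Q: {question}\nA:")
    return "\n".join(turns)


def ask(image_path: str, history: list, question: str) -> str:
    import replicate  # lazy import; needs REPLICATE_API_TOKEN in the environment

    with open(image_path, "rb") as image:
        output = replicate.run(
            MODEL_REF,
            input={"image": image, "query": build_query(history, question)},
        )
    answer = output if isinstance(output, str) else "".join(output)
    history.append((question, answer))  # keep context for the next turn
    return answer
```

Calling `ask` repeatedly with the same image and the same `history` list makes each new question carry the earlier exchange with it.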


sdxl-lightning-4step


sdxl-lightning-4step is a fast text-to-image model developed by ByteDance that can generate high-quality images in just 4 steps. It is similar to other fast diffusion models like AnimateDiff-Lightning and Instant-ID MultiControlNet, which also aim to speed up image generation. Compared with the original Stable Diffusion model, these fast models sacrifice some flexibility and control to achieve faster generation times.

Model inputs and outputs

The sdxl-lightning-4step model takes a text prompt and various parameters that control the output image, such as width, height, number of images, and guidance scale. It can output up to 4 images at a time, with a recommended image size of 1024x1024 or 1280x1280 pixels.

Inputs

- Prompt: The text prompt describing the desired image

- Negative prompt: A prompt describing what the model should not generate

- Width: The width of the output image

- Height: The height of the output image

- Num outputs: The number of images to generate (up to 4)

- Scheduler: The sampling algorithm used during denoising

- Guidance scale: The scale for classifier-free guidance, which controls the trade-off between fidelity to the prompt and sample diversity

- Num inference steps: The number of denoising steps; 4 is recommended for best results

- Seed: A random seed for reproducible output

Outputs

- Image(s): One or more images generated from the prompt and parameters

Capabilities

The sdxl-lightning-4step model can generate a wide variety of images from text prompts, from realistic scenes to imaginative compositions. Its 4-step generation process produces high-quality results quickly, making it suitable for applications that need fast image generation.

What can I use it for?

The sdxl-lightning-4step model could be useful for applications that generate images in real time, such as video game asset generation, interactive storytelling, or augmented reality experiences. Businesses could also use it to quickly produce product visualizations, marketing imagery, or custom artwork from client prompts. Creatives may find it helpful for ideation, concept development, or rapid prototyping.

Things to try

One interesting experiment is to vary the guidance scale parameter, which controls the balance between fidelity to the prompt and diversity of the output. Lower guidance scales may produce more unexpected and imaginative images, while higher scales produce outputs closer to the specified prompt.
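The guidance-scale experiment is easiest to run as a sweep with a fixed seed, so that only `guidance_scale` changes between images. The sketch below builds request payloads for the inputs listed for sdxl-lightning-4step; the snake_case field names are assumptions about the API spec, and both helper names are hypothetical.

```python
from typing import Iterable, List, Optional


def build_lightning_input(
    prompt: str,
    *,
    negative_prompt: str = "",
    width: int = 1024,
    height: int = 1024,
    num_outputs: int = 1,
    num_inference_steps: int = 4,  # 4 steps is the recommended setting
    guidance_scale: Optional[float] = None,
    scheduler: Optional[str] = None,
    seed: Optional[int] = None,
) -> dict:
    """Build a payload using assumed snake_case field names."""
    if not 1 <= num_outputs <= 4:
        raise ValueError("num_outputs must be between 1 and 4")
    payload = {
        "prompt": prompt,
        "negative_prompt": negative_prompt,
        "width": width,
        "height": height,
        "num_outputs": num_outputs,
        "num_inference_steps": num_inference_steps,
    }
    # Omit optional knobs that were not set so the model's own defaults apply.
    for key, value in (("guidance_scale", guidance_scale), ("scheduler", scheduler), ("seed", seed)):
        if value is not None:
            payload[key] = value
    return payload


def guidance_sweep(prompt: str, scales: Iterable[float], seed: int = 42) -> List[dict]:
    # Same seed for every request, so differences come from guidance_scale alone.
    return [build_lightning_input(prompt, guidance_scale=s, seed=seed) for s in scales]
```

Each payload can then be passed as `input=` to `replicate.run` together with the model's version reference from its API spec.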


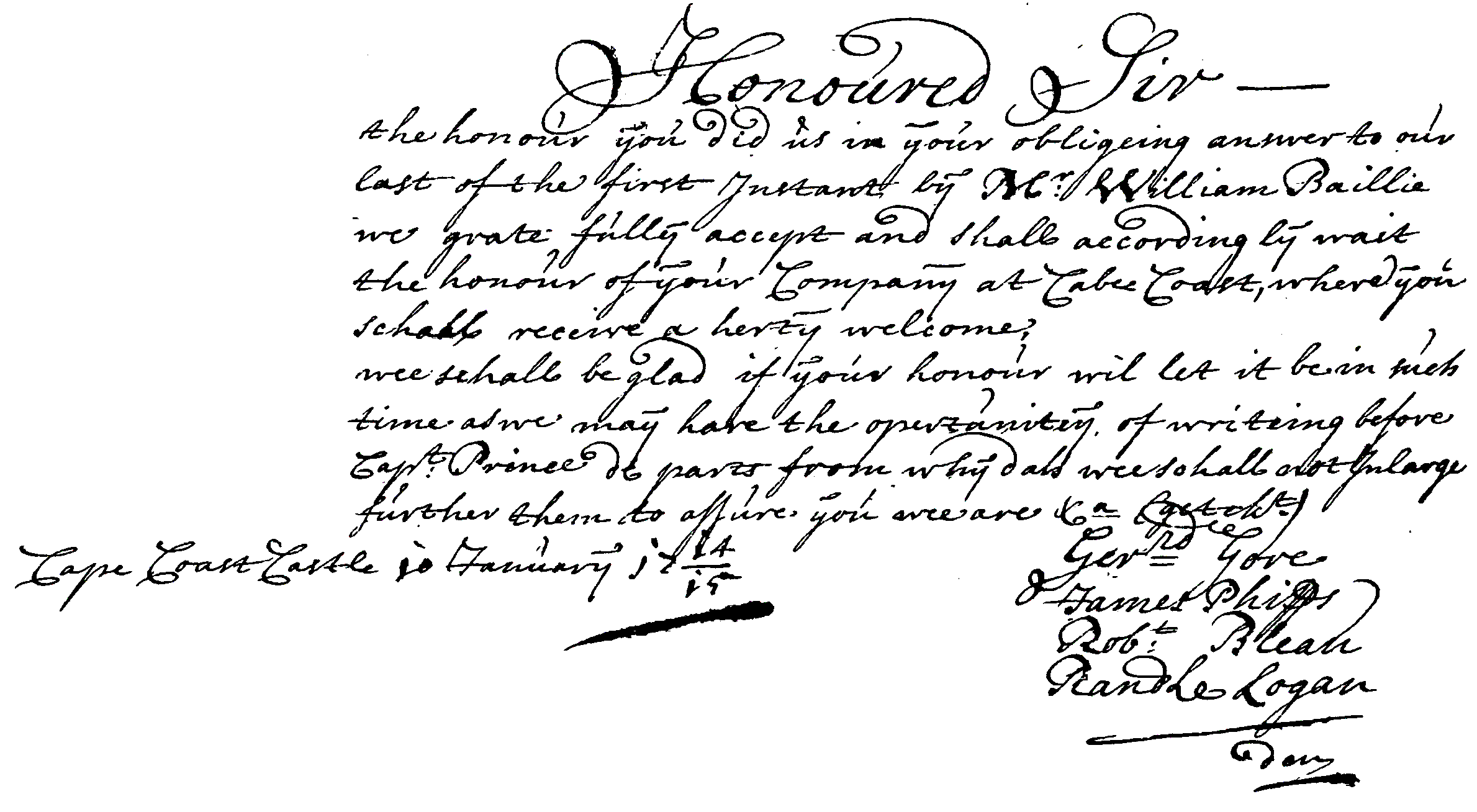

docentr


The docentr model is an end-to-end document image enhancement transformer, maintained on Replicate by cjwbw. It is a PyTorch implementation of the paper "DocEnTr: An End-to-End Document Image Enhancement Transformer" and is built on top of the vit-pytorch vision transformer library. The model is designed to enhance and binarize degraded document images.

Model inputs and outputs

The docentr model takes an image as input and produces an enhanced, binarized output image. The input can be a degraded or low-quality document; the model improves its visual quality through binarization, noise removal, and contrast enhancement.

Inputs

- image: The input image, in a valid image format (e.g., PNG, JPEG).

Outputs

- Output: The enhanced, binarized output image.

Capabilities

The docentr model performs end-to-end document image enhancement, including binarization, noise removal, and contrast improvement, making degraded documents more readable and easier to process. It has shown promising results on benchmark datasets such as DIBCO, H-DIBCO, and PALM.

What can I use it for?

The docentr model can be useful for applications that process and analyze document images, such as optical character recognition (OCR), document archiving, and image-based document retrieval. By enhancing input quality, it can improve the accuracy and reliability of these downstream tasks. It can also support document digitization, historical document restoration, and automated document processing workflows.

Things to try

You can test the docentr model on your own degraded document images and inspect the binarization and enhancement results. A pre-trained version is available on Replicate, so you can apply the enhancement without training the model yourself. You can also explore the provided demo notebook to learn how to use the model and customize its configuration.
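To make "binarization" concrete, the sketch below implements Otsu's method, a classical global-threshold technique for document binarization. It is not DocEnTr's learned approach; it works on a flat list of 8-bit grayscale values and only shows what a binarized output is. The function names are hypothetical.

```python
def otsu_threshold(pixels: list) -> int:
    """Return the gray level (0-255) that maximizes between-class variance."""
    hist = [0] * 256
    for p in pixels:
        hist[p] += 1
    total = len(pixels)
    sum_all = sum(level * count for level, count in enumerate(hist))

    sum_bg, weight_bg = 0.0, 0
    best_t, best_var = 0, -1.0
    for t in range(256):
        weight_bg += hist[t]  # pixels at or below t form the dark class
        if weight_bg == 0:
            continue
        weight_fg = total - weight_bg
        if weight_fg == 0:
            break
        sum_bg += t * hist[t]
        mean_bg = sum_bg / weight_bg
        mean_fg = (sum_all - sum_bg) / weight_fg
        var_between = weight_bg * weight_fg * (mean_bg - mean_fg) ** 2
        if var_between > best_var:
            best_var, best_t = var_between, t
    return best_t


def binarize(pixels: list, threshold: int) -> list:
    # Pixels brighter than the threshold become white paper (255), the rest black ink (0).
    return [255 if p > threshold else 0 for p in pixels]
```

For a page that is half dark ink at gray level 20 and half paper at 200, the threshold comes out at 20 and the result is pure black and white.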
