instruct-pix2pix

Maintainer: timothybrooks

819

| Property | Value |
|---|---|
| Run this model | Run on Replicate |
| API spec | View on Replicate |
| Github link | View on Github |
| Paper link | View on Arxiv |


Model overview

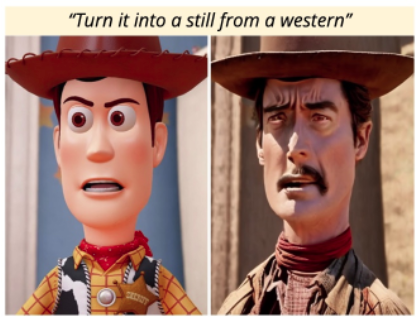

instruct-pix2pix is an image editing model that modifies an existing image according to a natural language instruction. Maintained by Timothy Brooks, it is similar to other instruction-guided models such as InstructIR, which performs image restoration through textual guidance. It builds on the widely used Stable Diffusion model, adding the ability to edit existing images rather than generating new ones from scratch.

Model inputs and outputs

The instruct-pix2pix model takes in an image and a textual prompt as inputs, and outputs a new edited image based on the provided instructions. The model is designed to be versatile, allowing users to guide the image editing process through natural language commands.

Inputs

- Image: An existing image that will be edited according to the provided prompt

- Prompt: A textual description of the desired edits to be made to the input image

Outputs

- Edited Image: The resulting image after applying the specified edits to the input image
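The input/output contract above can be sketched as a call through the Replicate Python client. This is a minimal sketch rather than the model's documented API: the model reference is composed from the maintainer and model name on this page, the payload keys `image` and `prompt` are assumed from the Inputs list, and the helper names `build_input` and `edit_image` are hypothetical. Check the API spec on Replicate for the exact field names and for the version identifier the reference may need.

```python
# Assumed model reference (maintainer/name from this page). Replicate may
# require a ":<version-id>" suffix; copy it from the model's API spec.
MODEL_REF = "timothybrooks/instruct-pix2pix"


def build_input(image, prompt):
    """Return the request payload: the image to edit plus the edit instruction.

    `image` can be a URL string or an open binary file handle.
    The key names "image" and "prompt" are assumptions based on the Inputs list.
    """
    if not prompt.strip():
        raise ValueError("prompt must describe the desired edit")
    return {"image": image, "prompt": prompt.strip()}


def edit_image(image_path, prompt, model_ref=MODEL_REF):
    """Send one edit request and return whatever the model outputs.

    Requires `pip install replicate` and the REPLICATE_API_TOKEN environment variable.
    """
    import replicate  # imported lazily so build_input works without the client

    with open(image_path, "rb") as image_file:
        return replicate.run(model_ref, input=build_input(image_file, prompt))
```

With a token set, `edit_image("photo.jpg", "turn him into a cyborg")` would submit one edit; the shape of the returned value depends on the model's output schema.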

Capabilities

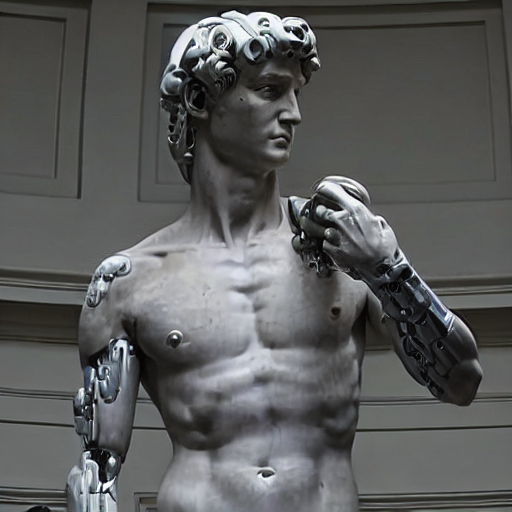

The instruct-pix2pix model excels at a wide range of image editing tasks, from simple modifications like changing the color scheme or adding visual elements, to more complex transformations like turning a person into a cyborg or altering the composition of a scene. The model's ability to understand and interpret natural language instructions allows for a highly intuitive and flexible editing experience.

What can I use it for?

The instruct-pix2pix model can be utilized in a variety of applications, such as photo editing, digital art creation, and even product visualization. For example, a designer could use the model to quickly experiment with different design ideas by providing textual prompts, or a marketer could create custom product images for their e-commerce platform by instructing the model to make specific changes to stock photos.

Things to try

The instruct-pix2pix model can produce creative and unexpected image transformations. Try prompts that push the limits of what the model can do, such as combining different artistic styles, merging multiple objects or characters, or asking for surreal and fantastical imagery.

This summary was produced with help from an AI and may contain inaccuracies - check out the links to read the original source documents!

Related Models

instruct-pix2pix

39

instruct-pix2pix is a versatile AI model that allows users to edit images by providing natural language instructions. It is similar to other image-to-image translation models like instructir and deoldify_image, which can perform tasks like face restoration and colorization. However, instruct-pix2pix stands out by allowing users to control the edits through free-form textual instructions, rather than relying solely on predefined editing operations.

Model inputs and outputs

instruct-pix2pix takes an input image and a natural language instruction as inputs, and produces an edited image as output. The model is trained to understand a wide range of editing instructions, from simple changes like "turn him into a cyborg" to more complex transformations.

Inputs

- Input Image: The image you want to edit

- Instruction Text: The natural language instruction describing the desired edit

Outputs

- Output Image: The edited image, following the provided instruction

Capabilities

instruct-pix2pix can perform a diverse range of image editing tasks, from simple modifications like changing an object's appearance, to more complex operations like adding or removing elements from a scene. The model is able to understand and faithfully execute a wide variety of instructions, allowing users to be highly creative and expressive in their edits.

What can I use it for?

instruct-pix2pix can be a powerful tool for creative projects, product design, and content creation. For example, you could use it to quickly mock up different design concepts, experiment with character designs, or generate visuals to accompany creative writing. The model's flexibility and ease of use make it an attractive option for a wide range of applications.

Things to try

One interesting aspect of instruct-pix2pix is its ability to preserve details from the original image while still making significant changes based on the provided instruction. Try experimenting with different levels of the "Cfg Text" and "Cfg Image" parameters to find the right balance between preserving the source image and following the editing instruction. You can also try different phrasings of the instruction to see how the model's interpretation and output change.
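To make the trade-off between "Cfg Text" and "Cfg Image" concrete, here is a minimal sketch of the two-scale classifier-free guidance rule described in the InstructPix2Pix paper. It assumes those two parameters correspond to the paper's text and image guidance scales; the function name `guided_noise` and the toy vectors are illustrative only and stand in for real noise predictions.

```python
def guided_noise(eps_uncond, eps_image, eps_full, cfg_image, cfg_text):
    """Combine three noise predictions using separate image and text scales.

    eps_uncond: prediction with neither the image nor the instruction
    eps_image:  prediction conditioned on the input image only
    eps_full:   prediction conditioned on both image and instruction
    """
    return [
        u + cfg_image * (i - u) + cfg_text * (f - i)
        for u, i, f in zip(eps_uncond, eps_image, eps_full)
    ]


# Toy two-element "predictions" to show how the scales move the result.
uncond, image_only, full = [0.0, 1.0], [1.0, 1.0], [2.0, 3.0]

# Both scales at 1.0 simply return the fully conditioned prediction.
print(guided_noise(uncond, image_only, full, cfg_image=1.0, cfg_text=1.0))

# A larger text scale pushes further along the "follow the instruction" direction.
print(guided_noise(uncond, image_only, full, cfg_image=1.5, cfg_text=7.5))
```

Under this rule, raising the image scale keeps the result closer to the source image, while raising the text scale makes the edit follow the instruction more strongly.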


instruct-pix2pix

860

instruct-pix2pix is an instruction-guided image editing model developed by Tim Brooks that modifies images based on natural language instructions. It implements the approach from the InstructPix2Pix paper, which trains a diffusion model to follow image editing instructions. Unlike plain text-to-image models, instruct-pix2pix focuses on producing images that adhere to a specific textual instruction, making it well-suited for applications that require controlled image generation. Related models like cartoonizer and stable-diffusion-xl-1.0-inpainting-0.1 focus on narrower tasks, cartoonization and inpainting respectively. In contrast, instruct-pix2pix is designed for general-purpose image editing guided by textual instructions.

Model inputs and outputs

Inputs

- Prompt: A natural language description of the desired edit, such as "turn him into a cyborg"

- Image: The input image that the model uses as the starting point for the final image

Outputs

- Generated Image: A new image that adheres to the provided instruction, produced by modifying the input image

Capabilities

The instruct-pix2pix model excels at generating images that closely match textual instructions. For example, you can use it to transform an existing image into a new one with specific desired characteristics, like "turn him into a cyborg". The model is able to understand the semantic meaning of the instruction and generate an appropriate image in response.

What can I use it for?

instruct-pix2pix could be useful for a variety of applications that require controlled image generation, such as:

- Creative tools: Allowing artists and designers to quickly generate images that match their creative vision, streamlining the ideation and prototyping process

- Educational applications: Helping students or hobbyists create custom illustrations to accompany their written work or presentations

- Assistive technology: Enabling individuals with disabilities or limited artistic skills to generate images to support their needs or express their ideas

Things to try

One interesting aspect of instruct-pix2pix is its ability to follow specific instructions while starting from an existing image, which lets you transform an image in a controlled way based on textual guidance. For example, you could try using the model to modify an existing portrait by instructing it to "turn the subject into a cyborg" or "make the background more futuristic".


sdxl-lightning-4step

412.2K

sdxl-lightning-4step is a fast text-to-image model developed by ByteDance that can generate high-quality images in just 4 steps. It is similar to other fast diffusion models like AnimateDiff-Lightning and Instant-ID MultiControlNet, which also aim to speed up the image generation process. Unlike the original Stable Diffusion model, these fast models sacrifice some flexibility and control to achieve faster generation times.

Model inputs and outputs

The sdxl-lightning-4step model takes in a text prompt and various parameters to control the output image, such as the width, height, number of images, and guidance scale. The model can output up to 4 images at a time, with a recommended image size of 1024x1024 or 1280x1280 pixels.

Inputs

- Prompt: The text prompt describing the desired image

- Negative prompt: A prompt that describes what the model should not generate

- Width: The width of the output image

- Height: The height of the output image

- Num outputs: The number of images to generate (up to 4)

- Scheduler: The algorithm used to sample the latent space

- Guidance scale: The scale for classifier-free guidance, which controls the trade-off between fidelity to the prompt and sample diversity

- Num inference steps: The number of denoising steps, with 4 recommended for best results

- Seed: A random seed to control the output image

Outputs

- Image(s): One or more images generated based on the input prompt and parameters

Capabilities

The sdxl-lightning-4step model is capable of generating a wide variety of images based on text prompts, from realistic scenes to imaginative and creative compositions. The model's 4-step generation process allows it to produce high-quality results quickly, making it suitable for applications that require fast image generation.

What can I use it for?

The sdxl-lightning-4step model could be useful for applications that need to generate images in real time, such as video game asset generation, interactive storytelling, or augmented reality experiences. Businesses could also use the model to quickly generate product visualizations, marketing imagery, or custom artwork based on client prompts. Creatives may find the model helpful for ideation, concept development, or rapid prototyping.

Things to try

One interesting thing to try with the sdxl-lightning-4step model is to experiment with the guidance scale parameter. By adjusting the guidance scale, you can control the balance between fidelity to the prompt and diversity of the output. Lower guidance scales may result in more unexpected and imaginative images, while higher scales will produce outputs that are closer to the specified prompt.
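The limits mentioned above (at most 4 outputs, 4 denoising steps recommended, 1024x1024 or 1280x1280 recommended) can be checked before a request is sent. The following is a hedged sketch: the helper `lightning_input` is hypothetical, and the snake_case payload keys are assumed from the input names listed above rather than taken from the model's API spec.

```python
# Sizes this page describes as recommended for the model.
RECOMMENDED_SIZES = {(1024, 1024), (1280, 1280)}


def lightning_input(prompt, width=1024, height=1024, num_outputs=1,
                    num_inference_steps=4):
    """Build a request payload and collect notes about non-recommended settings.

    Returns (payload, notes). Raises ValueError for the one hard limit stated
    on this page: the model outputs at most 4 images per request.
    """
    if not 1 <= num_outputs <= 4:
        raise ValueError("num_outputs must be between 1 and 4")

    notes = []
    if (width, height) not in RECOMMENDED_SIZES:
        notes.append(f"{width}x{height} is not a recommended size")
    if num_inference_steps != 4:
        notes.append("4 inference steps are recommended for this model")

    # Key names are assumptions derived from the Inputs list.
    payload = {
        "prompt": prompt,
        "width": width,
        "height": height,
        "num_outputs": num_outputs,
        "num_inference_steps": num_inference_steps,
    }
    return payload, notes


payload, notes = lightning_input("a red fox in the snow", width=512, height=512)
print(payload["width"], notes)
```

Returning notes instead of raising for the soft recommendations keeps unusual sizes and step counts possible while still flagging them.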


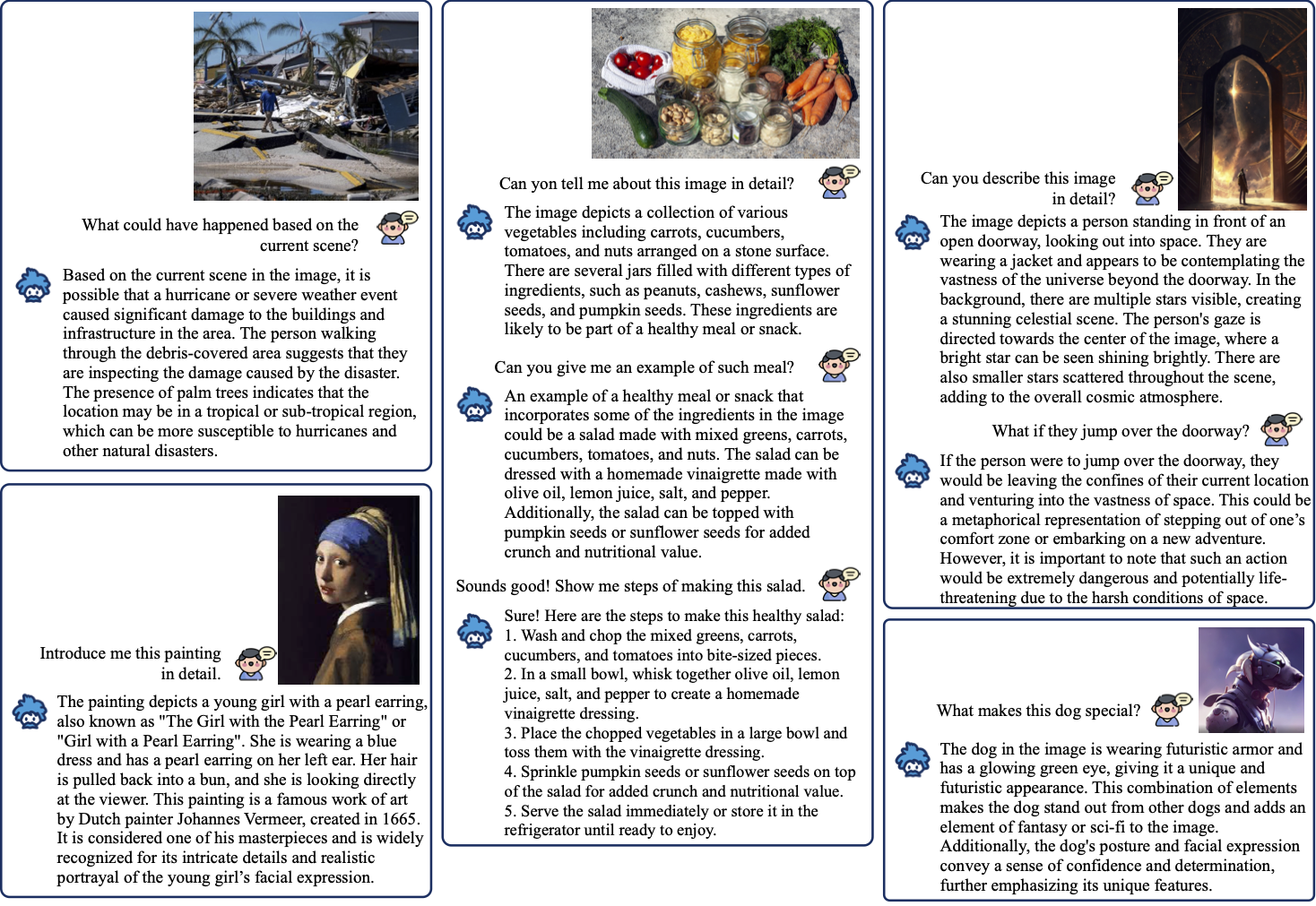

instructblip

537

InstructBLIP is an image captioning model that leverages vision-language models with instruction tuning. It builds upon the BLIP model, which is a bootstrapping language-image pre-training approach. InstructBLIP aims to be a more general-purpose vision-language model by incorporating instruction tuning, which allows it to better understand and follow natural language instructions. This model can be contrasted with other multi-modal models like LLAVA-13B and Stable Diffusion, which focus on visual instruction tuning and text-to-image generation respectively.

Model inputs and outputs

InstructBLIP takes an image as input and generates a text description of that image. The key inputs are the image path, a prompt to guide the caption, and various parameters to control the output length, sampling, and penalties. The model outputs a text string containing the generated caption.

Inputs

- Image Path: The path to the image to be captioned

- Prompt: The natural language prompt to guide the caption generation

- Max Len: The maximum length of the generated caption

- Min Len: The minimum length of the generated caption

- Beam Size: The number of candidate captions to consider

- Len Penalty: A penalty factor applied to the length of the generated caption

- Repetition Penalty: A penalty factor applied to repeated tokens in the generated caption

- Top P: The top-p nucleus sampling parameter to control the randomness of the output

- Use Nucleus Sampling: A boolean to enable or disable the use of nucleus sampling

Outputs

- Output: The generated text caption for the input image

Capabilities

InstructBLIP is capable of generating human-like image captions that are tailored to the provided prompt. It can understand and follow natural language instructions to produce captions that are relevant and contextual. The model has been trained on a large dataset of image-text pairs, giving it a broad knowledge base to draw from.

What can I use it for?

You can use InstructBLIP for a variety of applications that require generating textual descriptions of images, such as:

- Automating the captioning of images in a content management system or e-commerce platform

- Enhancing accessibility by providing alt-text descriptions for images

- Generating captions for social media posts or marketing materials

- Powering image-based search or retrieval systems

The instruction tuning capabilities of InstructBLIP also make it well-suited for more specialized tasks, such as generating captions for medical images or providing detailed technical descriptions of engineering diagrams.

Things to try

One interesting aspect of InstructBLIP is its ability to generate captions that adhere to specific instructions or constraints. For example, you could try providing prompts that ask the model to describe the image from a particular perspective (e.g., "Describe the scene as if you were a young child looking at the image") or to focus on certain visual elements (e.g., "Describe the colors and textures in the image"). Experimenting with different prompts and parameters can help you uncover the model's versatility and discover new ways to leverage its capabilities.
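The "Top P" input listed above controls nucleus sampling. As a rough illustration of what that parameter does, and not of InstructBLIP's actual decoding code, the sketch below keeps the smallest set of most likely next tokens whose combined probability reaches `top_p` and renormalizes them. The function name `nucleus_filter` and the toy token distribution are made up for the example.

```python
def nucleus_filter(probs, top_p):
    """Keep the most likely tokens until their total probability reaches top_p.

    probs: dict mapping token -> probability (assumed to sum to about 1)
    Returns a new dict with the kept tokens renormalized to sum to 1.
    """
    if not 0.0 < top_p <= 1.0:
        raise ValueError("top_p must be in (0, 1]")

    ranked = sorted(probs.items(), key=lambda item: item[1], reverse=True)
    kept, total = [], 0.0
    for token, p in ranked:
        kept.append((token, p))
        total += p
        if total >= top_p:
            break  # the nucleus is complete; drop the remaining tail
    return {token: p / total for token, p in kept}


# Toy next-token distribution for a caption.
next_token = {"dog": 0.5, "cat": 0.3, "car": 0.2}
print(sorted(nucleus_filter(next_token, top_p=0.7)))  # keeps 'cat' and 'dog'
```

A lower `top_p` restricts sampling to fewer high-probability tokens, which makes captions more predictable; a value near 1 lets rarer tokens through.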
