paella_fast_outpainting

Maintainer: arielreplicate


| Property | Value |
|---|---|
| Run this model | Run on Replicate |
| API spec | View on Replicate |
| GitHub link | View on GitHub |
| Paper link | View on arXiv |


Model overview

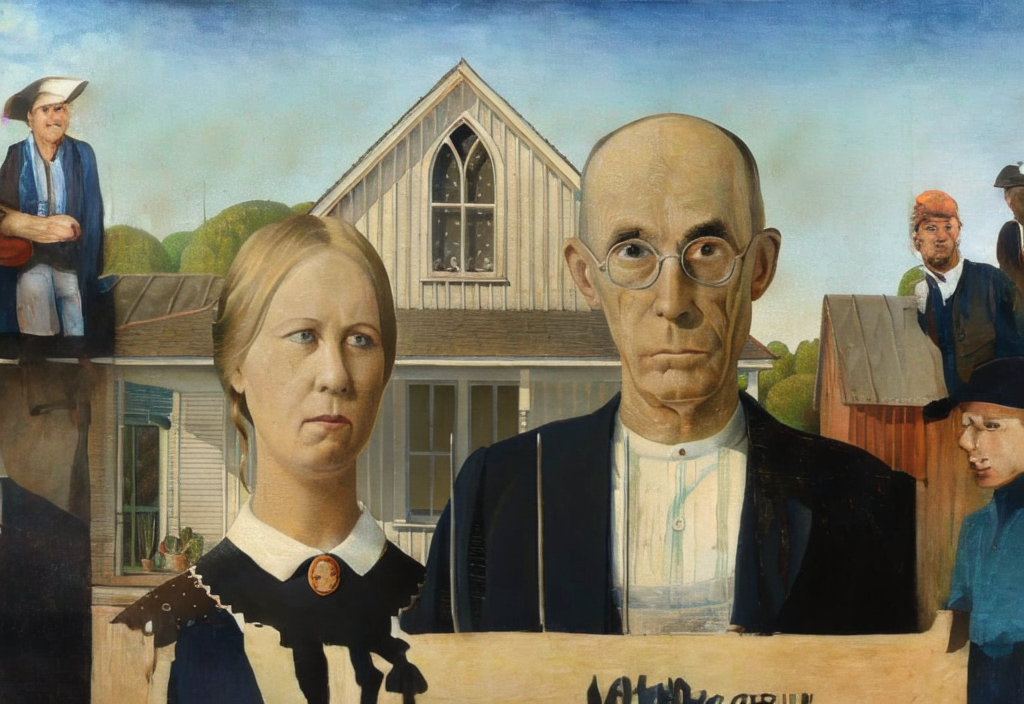

paella_fast_outpainting is a fast image outpainting model maintained on Replicate by arielreplicate. It is comparable to other outpainting models such as sdxl-outpainting-lora, which uses PatchMatch to improve mask quality, and realisticoutpainter, which combines Stable Diffusion and ControlNet for outpainting. The emphasis of paella_fast_outpainting is speed: it aims to extend an image beyond its original borders quickly and efficiently.

Model inputs and outputs

paella_fast_outpainting takes an input image, a prompt, and the relative location and size of the output image. It then generates an expanded version of the input image based on the provided parameters.

Inputs

- Prompt: A text description to guide the outpainting process

- Input Image: The image to be expanded

- Input Location: The relative location of the input image on the canvas (e.g., `0.5,0.5` for the center)

- Output Relative Size: The desired size of the output image relative to the input (e.g., `1.5,1.5` for 1.5 times larger)

Outputs

- Output Images: An array of expanded images based on the input parameters
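To make the relationship between Input Location and Output Relative Size concrete, here is a minimal sketch of the canvas arithmetic. It is not the model's own code. The helper names (`parse_pair`, `plan_canvas`, `CanvasLayout`) are hypothetical, and the interpretation of the location value (0 places the input flush with the left or top edge, 1 flush with the right or bottom edge, 0.5 centered) is an assumption chosen to agree with the `0.5,0.5` example above.

```python
from dataclasses import dataclass


def parse_pair(text: str) -> tuple[float, float]:
    """Parse a string such as "0.5,0.5" into two floats."""
    parts = [p.strip() for p in text.split(",")]
    if len(parts) != 2:
        raise ValueError(f"expected 'x,y', got {text!r}")
    return float(parts[0]), float(parts[1])


@dataclass(frozen=True)
class CanvasLayout:
    canvas_width: int
    canvas_height: int
    left: int  # x offset of the input image's top-left corner on the canvas
    top: int   # y offset of the input image's top-left corner on the canvas


def plan_canvas(width: int, height: int,
                location: str = "0.5,0.5",
                relative_size: str = "1.5,1.5") -> CanvasLayout:
    """Compute the output canvas size and where the input image sits on it."""
    loc_x, loc_y = parse_pair(location)
    scale_x, scale_y = parse_pair(relative_size)
    if not (0.0 <= loc_x <= 1.0 and 0.0 <= loc_y <= 1.0):
        raise ValueError("location values must lie in [0, 1]")
    if scale_x < 1.0 or scale_y < 1.0:
        raise ValueError("outpainting needs a relative size of at least 1")

    canvas_w = round(width * scale_x)
    canvas_h = round(height * scale_y)
    # Assumption: the location interpolates over the free margin, so 0.5
    # splits the extra space evenly on both sides of the input image.
    left = round((canvas_w - width) * loc_x)
    top = round((canvas_h - height) * loc_y)
    return CanvasLayout(canvas_w, canvas_h, left, top)
```

Under these assumptions, a 512x512 input with the default arguments yields a 768x768 canvas with the original image offset by 128 pixels on each axis; the region outside that rectangle is what the model would be asked to fill.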

Capabilities

paella_fast_outpainting is capable of quickly generating expanded versions of input images. This can be useful for tasks like creating panoramic images, extending the canvas of artwork, or generating larger versions of photos.

What can I use it for?

paella_fast_outpainting can be used for a variety of creative and practical applications, such as:

- Expanding landscape or cityscape photos to create panoramic images

- Extending the canvas of digital paintings or illustrations

- Generating larger versions of product photos or other images for use in marketing or e-commerce

- Experimenting with different outpainting techniques and exploring the capabilities of AI-generated content

Things to try

Try experimenting with different input prompts, locations, and relative sizes to see how the model generates expanded images. You could also try combining paella_fast_outpainting with other models like deoldify_video to colorize and expand old footage, or realistic-vision-v5-inpainting to inpaint and outpaint images in a seamless way.
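One way to organize such experiments is to build a small grid of inputs and run each combination. The sketch below is illustrative only: the input keys (`prompt`, `input_image`, `input_location`, `output_relative_size`) are assumed names derived from the input list in this document and should be checked against the API spec on Replicate, and the model reference may need an explicit version hash appended. `replicate.run` is the entry point of the official `replicate` Python client, which requires a `REPLICATE_API_TOKEN` in the environment.

```python
from itertools import product

# Assumption: owner/name taken from this page; a ":<version>" suffix may be required.
MODEL_REF = "arielreplicate/paella_fast_outpainting"


def build_inputs(prompt, image_url, locations, sizes):
    """Return one input dict per (location, size) combination."""
    return [
        {
            # All four keys are assumed names; verify them in the API spec.
            "prompt": prompt,
            "input_image": image_url,
            "input_location": location,
            "output_relative_size": size,
        }
        for location, size in product(locations, sizes)
    ]


def run_sweep(payloads):
    """Call the model once per payload and collect the outputs."""
    import replicate  # imported lazily so building payloads needs no client

    return [replicate.run(MODEL_REF, input=payload) for payload in payloads]


if __name__ == "__main__":
    payloads = build_inputs(
        prompt="a wide sandy beach at sunset",
        image_url="https://example.com/photo.png",  # placeholder URL
        locations=["0.5,0.5", "0,0.5"],
        sizes=["1.5,1.5", "2,1"],
    )
    for payload in payloads:
        print(payload["input_location"], payload["output_relative_size"])
```

Keeping payload construction separate from the network call makes it easy to inspect or log the grid before spending any compute on it.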

This summary was produced with help from an AI and may contain inaccuracies - check out the links to read the original source documents!

Related Models

deoldify_image


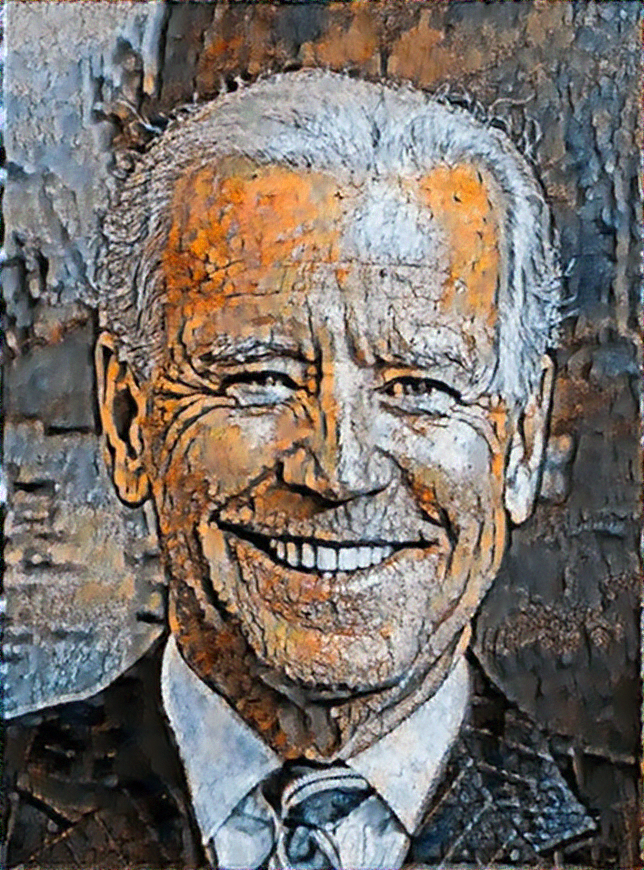

The deoldify_image model from maintainer arielreplicate is a deep learning-based AI model that can add color to old black-and-white images. It builds upon techniques like Self-Attention Generative Adversarial Network and Two Time-Scale Update Rule, and introduces a novel "NoGAN" training approach to achieve high-quality, stable colorization results.

The model is part of the DeOldify project, which aims to colorize and restore old images and film footage. It offers three variants - "Artistic", "Stable", and "Video" - each optimized for different use cases. The Artistic model produces the most vibrant colors but may leave important parts of the image gray, while the Stable model is better suited for natural scenes and less prone to leaving gray human parts. The Video model is optimized for smooth, consistent and flicker-free video colorization.

Model inputs and outputs

Inputs

- model_name: Specifies which model to use - "Artistic", "Stable", or "Video"

- input_image: The path to the black-and-white image to be colorized

- render_factor: Determines the resolution at which the color portion of the image is rendered. Lower values render faster but may result in less vibrant colors, while higher values can produce more detailed results but may wash out the colors.

Outputs

- The colorized version of the input image, returned as a URI.

Capabilities

The deoldify_image model can produce high-quality, realistic colorization of old black-and-white images, with impressive results on a wide range of subjects like historical photos, portraits, landscapes, and even old film footage. The use of the "NoGAN" training approach helps to eliminate common issues like flickering, glitches, and inconsistent coloring that plagued earlier colorization models.

What can I use it for?

The deoldify_image model can be a powerful tool for breathtaking photo restoration and enhancement projects. It could be used to bring historical images to life, add visual interest to old family photos, or even breathe new life into classic black-and-white films. Potential applications include historical archives, photo sharing services, film restoration, and more.

Things to try

One interesting aspect of the deoldify_image model is that it seems to have learned some underlying "rules" about color based on subtle cues in the black-and-white images, resulting in remarkably consistent and deterministic colorization decisions. This means the model can produce very stable, flicker-free results even when coloring moving scenes in video. Experimenting with different input images, especially ones with unique or challenging elements, could yield fascinating insights into the model's inner workings.


instruct-pix2pix


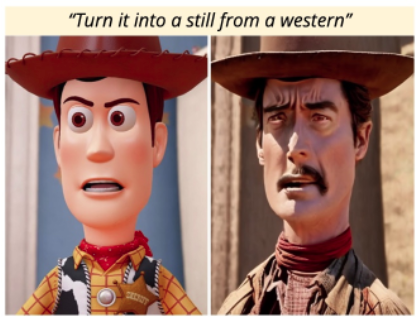

instruct-pix2pix is a versatile AI model that allows users to edit images by providing natural language instructions. It is similar to other image-to-image translation models like instructir and deoldify_image, which can perform tasks like face restoration and colorization. However, instruct-pix2pix stands out by allowing users to control the edits through free-form textual instructions, rather than relying solely on predefined editing operations.

Model inputs and outputs

instruct-pix2pix takes an input image and a natural language instruction as inputs, and produces an edited image as output. The model is trained to understand a wide range of editing instructions, from simple changes like "turn him into a cyborg" to more complex transformations.

Inputs

- Input Image: The image you want to edit

- Instruction Text: The natural language instruction describing the desired edit

Outputs

- Output Image: The edited image, following the provided instruction

Capabilities

instruct-pix2pix can perform a diverse range of image editing tasks, from simple modifications like changing an object's appearance, to more complex operations like adding or removing elements from a scene. The model is able to understand and faithfully execute a wide variety of instructions, allowing users to be highly creative and expressive in their edits.

What can I use it for?

instruct-pix2pix can be a powerful tool for creative projects, product design, and content creation. For example, you could use it to quickly mock up different design concepts, experiment with character designs, or generate visuals to accompany creative writing. The model's flexibility and ease of use make it an attractive option for a wide range of applications.

Things to try

One interesting aspect of instruct-pix2pix is its ability to preserve details from the original image while still making significant changes based on the provided instruction. Try experimenting with different levels of the "Cfg Text" and "Cfg Image" parameters to find the right balance between preserving the source image and following the editing instruction. You can also try different phrasing of the instructions to see how the model's interpretation and output changes.


gpdm


The gpdm model, developed by Ariel Elnekave and Yair Weiss, is an image generation algorithm that produces natural images by directly matching the patch distribution of a reference image. This approach differs from learned generative models such as GANs or diffusion models like Stable Diffusion, which generate images from scratch. Instead, gpdm manipulates and reshuffles patches from the reference image to create new, photorealistic outputs.

Model inputs and outputs

The gpdm model takes in a reference image and generates new images that match the statistical properties of the input. The model supports several tasks, including image reshuffling, retargeting, style transfer, and texture synthesis. Depending on the task, the model accepts additional parameters like content images, width/height factors, and the number of outputs to generate.

Inputs

- Reference Image: The main input image used as a reference for the generation process.

- Content Image: Only required for the style transfer task, this is the image whose content will be used.

- Width/Height Factor: Controls the aspect ratio of the output image for the retargeting task.

- Num Outputs: Specifies how many output images to generate, which can improve quality and diversity.

Outputs

- Generated Images: The model outputs one or more new images that match the statistical properties of the reference image, as specified by the input parameters.

Capabilities

The gpdm model is capable of generating diverse, photorealistic images by directly matching the patch distribution of a reference image. This approach allows the model to capture the intricate structures and patterns present in natural images, resulting in outputs that are more faithful to the original than those of traditional GAN-based models.

What can I use it for?

The gpdm model has a wide range of potential use cases, from creative image editing to data augmentation. For example, you could use it to generate variations of a single image for a design project, or to create a diverse dataset of images for training other machine learning models. The model's ability to perform tasks like style transfer and texture synthesis also makes it a useful tool for artists and designers.

Things to try

One particularly interesting aspect of the gpdm model is its ability to perform image retargeting, where it can generate a new version of the reference image with a different aspect ratio. This could be useful for adapting images to different display sizes or aspect ratios, without losing the essential characteristics of the original. Another intriguing use case is the model's potential for image inpainting and completion. By manipulating the patch distribution, it may be possible to fill in missing regions of an image or repair damaged areas, while preserving the overall visual coherence.


deoldify_video


The deoldify_video model is a deep learning-based video colorization model maintained on Replicate by arielreplicate. It builds upon the open-source DeOldify project, which aims to colorize and restore old images and film footage. The deoldify_video model is specifically optimized for stable, consistent, and flicker-free video colorization.

The deoldify_video model is one of three DeOldify models available, along with the "artistic" and "stable" image colorization models. Each model has its own strengths and use cases: the video model prioritizes stability and consistency over maximum vibrance, making it well-suited for colorizing old film footage.

Model inputs and outputs

Inputs

- input_video: The path to a video file to be colorized.

- render_factor: An integer that determines the resolution at which the color portion of the image is rendered. Lower values will render faster but may result in less detailed colorization, while higher values can produce more vibrant colors but take longer to process.

Outputs

- Output: The path to the colorized video output.

Capabilities

The deoldify_video model is capable of adding realistic color to old black-and-white video footage while maintaining a high degree of stability and consistency. Unlike the previous version of DeOldify, this model is able to produce colorized videos with minimal flickering or artifacts, making it well-suited for processing historical footage.

The model has been trained using a novel "NoGAN" technique, which combines the benefits of Generative Adversarial Network (GAN) training with more conventional methods to achieve high-quality results efficiently. This approach helps to eliminate many of the common issues associated with GAN-based colorization, such as inconsistent coloration and visual artifacts.

What can I use it for?

The deoldify_video model can be used to breathe new life into old black-and-white films and footage, making them more engaging and accessible to modern audiences. This could be particularly useful for historical documentaries, educational materials, or personal archival projects.

By colorizing old video, the deoldify_video model can help preserve and showcase cultural heritage, enabling viewers to better connect with the people and events depicted. The consistent and stable colorization results make it suitable for professional-quality video productions.

Things to try

One interesting aspect of the DeOldify project is the way the models seem to arrive at consistent colorization decisions, even for seemingly arbitrary details like clothing and special effects. This suggests the models are learning underlying rules about how to colorize based on subtle cues in the black-and-white footage.

When using the deoldify_video model, you can experiment with adjusting the render_factor parameter to find the sweet spot between speed and quality for your particular use case. Higher render factors can produce more detailed and vibrant results, but may take longer to process.

Additionally, the DeOldify project notes that using a ResNet101 backbone for the generator network, rather than the smaller ResNet34, can help improve the consistency of skin tones and other key details in the colorized output.
