hierspeechpp

Maintainer: adirik


| Property | Value |
|---|---|
| Run this model | Run on Replicate |
| API spec | View on Replicate |
| GitHub link | View on GitHub |
| Paper link | View on arXiv |


Model overview

hierspeechpp is a zero-shot text-to-speech model maintained on Replicate by adirik. Given text and a clip of a target voice, it generates speech in that voice without any speaker-specific training. Comparable text-to-speech models include styletts2, voicecraft, and whisperspeech-small.

Model inputs and outputs

hierspeechpp takes text or audio as the source of the speech content and returns an audio file. You also provide a target voice clip, and the model synthesizes the output in that speaker's voice. Because the approach is zero-shot, no additional training data for the target speaker is required.

Inputs

- input_text: (optional) Text input to the model. If provided, it will be used for the speech content of the output.

- input_sound: (optional) Sound input to the model in .wav format. If provided, it will be used for the speech content of the output.

- target_voice: A voice clip in .wav format containing the speaker to synthesize.

- denoise_ratio: Noise control. 0 means no noise reduction, 1 means maximum noise reduction.

- text_to_vector_temperature: Temperature for text-to-vector model. Larger value corresponds to slightly more random output.

- output_sample_rate: Sample rate of the output audio file.

- scale_output_volume: Scale normalization. If set to true, the output audio will be scaled according to the input sound if provided.

- seed: Random seed to use for reproducibility.

Outputs

- Output: An audio file in .mp3 format containing the synthesized speech.
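The inputs listed above can be collected into a single request payload. The following is a minimal sketch, not the maintainer's reference code: the helper names `build_input` and `run_on_replicate` are hypothetical, the example URL is a placeholder, and the rule that at least one of input_text or input_sound must be present is an assumption based on the descriptions above. Options left unset are omitted so that the model's own defaults apply. The call to `replicate.run` assumes the official Replicate Python client is installed and an API token is configured.

```python
from typing import Any, Dict, Optional


def build_input(
    target_voice: str,
    input_text: Optional[str] = None,
    input_sound: Optional[str] = None,
    denoise_ratio: Optional[float] = None,
    text_to_vector_temperature: Optional[float] = None,
    output_sample_rate: Optional[int] = None,
    scale_output_volume: Optional[bool] = None,
    seed: Optional[int] = None,
) -> Dict[str, Any]:
    """Collect the documented inputs into one payload, skipping unset options."""
    # Assumption: the model needs at least one source of speech content.
    if input_text is None and input_sound is None:
        raise ValueError("provide input_text or input_sound")
    # Documented range: 0 means no noise reduction, 1 means maximum.
    if denoise_ratio is not None and not 0.0 <= denoise_ratio <= 1.0:
        raise ValueError("denoise_ratio must be between 0 and 1")

    candidates = {
        "target_voice": target_voice,
        "input_text": input_text,
        "input_sound": input_sound,
        "denoise_ratio": denoise_ratio,
        "text_to_vector_temperature": text_to_vector_temperature,
        "output_sample_rate": output_sample_rate,
        "scale_output_volume": scale_output_volume,
        "seed": seed,
    }
    # Drop unset values so the model falls back to its own defaults.
    return {name: value for name, value in candidates.items() if value is not None}


def run_on_replicate(model_ref: str, payload: Dict[str, Any]) -> Any:
    """Send the payload to Replicate; requires the `replicate` package and an API token."""
    import replicate  # imported lazily so build_input works without the client

    return replicate.run(model_ref, input=payload)


payload = build_input(
    target_voice="https://example.com/speaker.wav",  # placeholder URL, not a real clip
    input_text="Hello from a cloned voice.",
    denoise_ratio=0.5,
    seed=42,
)
print(payload)
```

To submit the request, pass the model reference shown on the Replicate page (owner, model name, and version identifier) as `model_ref`; the version identifier is not reproduced here and has to be copied from that page.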

Capabilities

hierspeechpp generates speech in the voice of a speaker it has only heard in a target voice clip, with no additional training data for that speaker. This zero-shot capability supports applications such as voice cloning, audiobook narration, and dubbing.

What can I use it for?

You can use hierspeechpp for various speech-related tasks, such as creating custom voice interfaces, generating audio content for podcasts or audiobooks, or even dubbing videos in different languages. The zero-shot nature of the model makes it particularly useful for projects where you need to generate speech in a specific voice without access to a large dataset of that speaker's recordings.

Things to try

One interesting thing to try with hierspeechpp is to vary the input parameters. Raising denoise_ratio toward 1 applies more noise reduction, while a larger text_to_vector_temperature makes the output slightly more random. Fixing the seed while changing one parameter at a time makes the effect of each setting easier to hear. You can also try different target voice clips to see how the model adapts to different speakers.
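One way to organize such an experiment is to generate a grid of inputs that differ only in denoise_ratio and text_to_vector_temperature. The sketch below is illustrative: the function name `parameter_sweep`, the example values, and the placeholder URL are assumptions, and each resulting payload would still need to be submitted to the model separately.

```python
from itertools import product
from typing import Any, Dict, Iterable, List


def parameter_sweep(
    base_input: Dict[str, Any],
    denoise_ratios: Iterable[float],
    temperatures: Iterable[float],
    seed: int = 0,
) -> List[Dict[str, Any]]:
    """Return one input payload per (denoise_ratio, temperature) pair."""
    payloads = []
    for ratio, temperature in product(denoise_ratios, temperatures):
        # Documented range: 0 means no noise reduction, 1 means maximum.
        if not 0.0 <= ratio <= 1.0:
            raise ValueError("denoise_ratio must be between 0 and 1")
        payload = dict(base_input)  # copy so the base input is not modified
        payload.update(
            denoise_ratio=ratio,
            text_to_vector_temperature=temperature,
            seed=seed,  # same seed everywhere so only the swept values differ
        )
        payloads.append(payload)
    return payloads


base = {
    "input_text": "The quick brown fox jumps over the lazy dog.",
    "target_voice": "https://example.com/speaker.wav",  # placeholder URL
}
grid = parameter_sweep(base, denoise_ratios=[0.0, 0.5, 1.0], temperatures=[0.2, 0.5])
for payload in grid:
    print(payload["denoise_ratio"], payload["text_to_vector_temperature"])
```

Keeping the seed and the target voice fixed across the grid means that differences between the generated clips can be attributed to the two swept parameters.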

This summary was produced with help from an AI and may contain inaccuracies - check out the links to read the original source documents!

Related Models

styletts2


styletts2 is a text-to-speech (TTS) model developed by Yinghao Aaron Li, Cong Han, Vinay S. Raghavan, Gavin Mischler, and Nima Mesgarani. It leverages style diffusion and adversarial training with large speech language models (SLMs) to achieve human-level TTS synthesis. Unlike its predecessor, styletts2 models styles as a latent random variable through diffusion models, allowing it to generate the most suitable style for the text without requiring reference speech. It also employs large pre-trained SLMs, such as WavLM, as discriminators with a novel differentiable duration modeling for end-to-end training, resulting in improved speech naturalness.

Model inputs and outputs

styletts2 takes in text and generates high-quality speech audio. The model inputs and outputs are as follows:

Inputs

- Text: The text to be converted to speech.

- Beta: A parameter that determines the prosody of the generated speech, with lower values sampling style based on previous or reference speech and higher values sampling more from the text.

- Alpha: A parameter that determines the timbre of the generated speech, with lower values sampling style based on previous or reference speech and higher values sampling more from the text.

- Reference: An optional reference speech audio to copy the style from.

- Diffusion Steps: The number of diffusion steps to use in the generation process, with higher values resulting in better quality but longer generation time.

- Embedding Scale: A scaling factor for the text embedding, which can be used to produce more pronounced emotion in the generated speech.

Outputs

- Audio: The generated speech audio in the form of a URI.

Capabilities

styletts2 is capable of generating human-level TTS synthesis on both single-speaker and multi-speaker datasets. It surpasses human recordings on the LJSpeech dataset and matches human performance on the VCTK dataset. When trained on the LibriTTS dataset, styletts2 also outperforms previous publicly available models for zero-shot speaker adaptation.

What can I use it for?

styletts2 can be used for a variety of applications that require high-quality text-to-speech generation, such as audiobook production, voice assistants, language learning tools, and more. The ability to control the prosody and timbre of the generated speech, as well as the option to use reference audio, makes styletts2 a versatile tool for creating personalized and expressive speech output.

Things to try

One interesting aspect of styletts2 is its ability to perform zero-shot speaker adaptation on the LibriTTS dataset. This means that the model can generate speech in the style of speakers it has not been explicitly trained on, by leveraging the diverse speech synthesis offered by the diffusion model. Developers could explore the limits of this zero-shot adaptation and experiment with fine-tuning the model on new speakers to further improve the quality and diversity of the generated speech.


whisper

30.6K

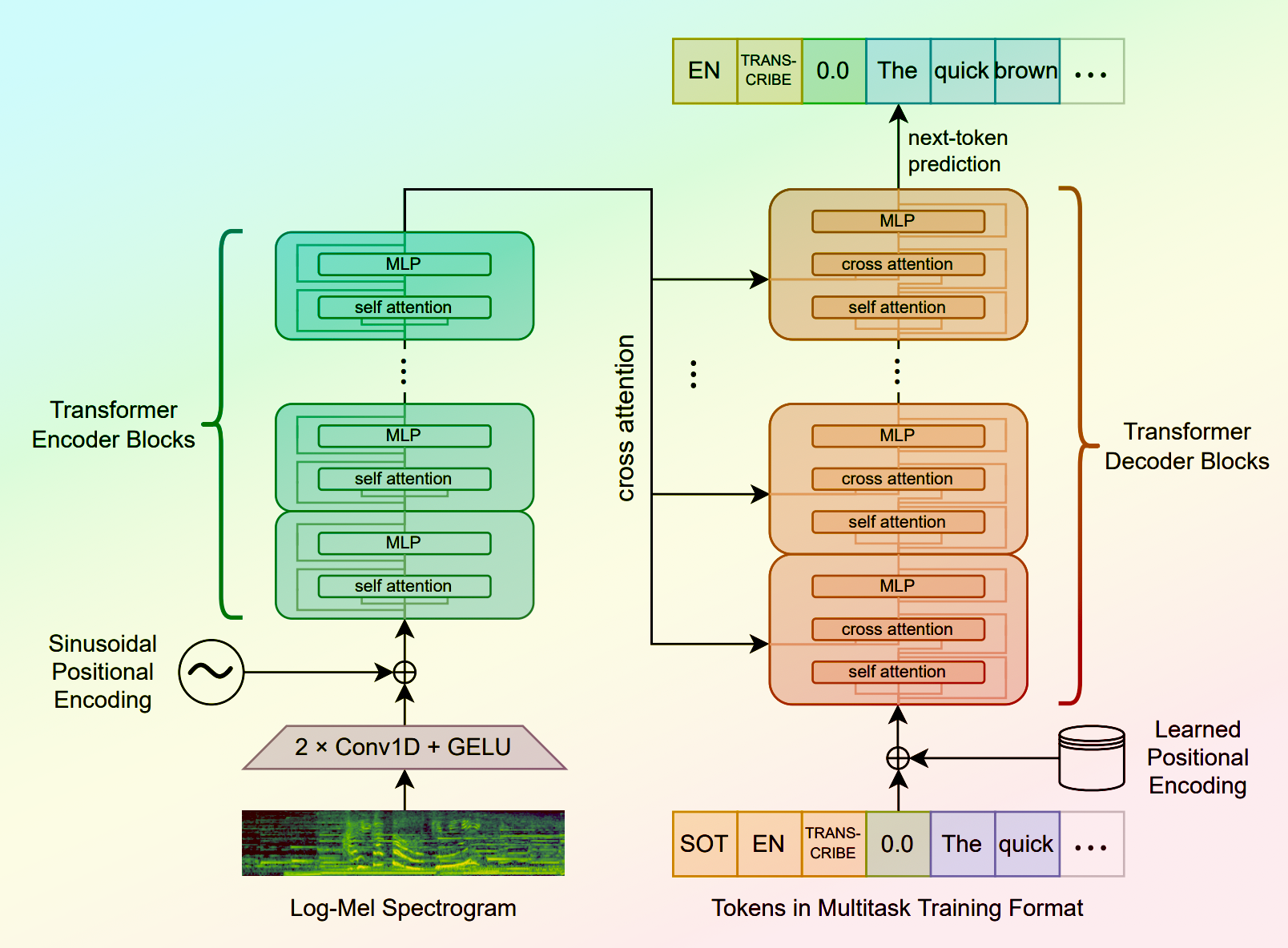

Whisper is a general-purpose speech recognition model developed by OpenAI. It is capable of converting speech in audio to text, with the ability to translate the text to English if desired. Whisper is based on a large Transformer model trained on a diverse dataset of multilingual and multitask speech recognition data. This allows the model to handle a wide range of accents, background noises, and languages. Similar models like whisper-large-v3, incredibly-fast-whisper, and whisper-diarization offer various optimizations and additional features built on top of the core Whisper model.

Model inputs and outputs

Whisper takes an audio file as input and outputs a text transcription. The model can also translate the transcription to English if desired. The input audio can be in various formats, and the model supports a range of parameters to fine-tune the transcription, such as temperature, patience, and language.

Inputs

- Audio: The audio file to be transcribed

- Model: The specific version of the Whisper model to use, currently only large-v3 is supported

- Language: The language spoken in the audio, or None to perform language detection

- Translate: A boolean flag to translate the transcription to English

- Transcription: The format for the transcription output, such as "plain text"

- Initial Prompt: An optional initial text prompt to provide to the model

- Suppress Tokens: A list of token IDs to suppress during sampling

- Logprob Threshold: The minimum average log probability threshold for a successful transcription

- No Speech Threshold: The threshold for considering a segment as silence

- Condition on Previous Text: Whether to provide the previous output as a prompt for the next window

- Compression Ratio Threshold: The maximum compression ratio threshold for a successful transcription

- Temperature Increment on Fallback: The temperature increase when the decoding fails to meet the specified thresholds

Outputs

- Transcription: The text transcription of the input audio

- Language: The detected language of the audio (if language input is None)

- Tokens: The token IDs corresponding to the transcription

- Timestamp: The start and end timestamps for each word in the transcription

- Confidence: The confidence score for each word in the transcription

Capabilities

Whisper is a powerful speech recognition model that can handle a wide range of accents, background noises, and languages. The model is capable of accurately transcribing audio and optionally translating the transcription to English. This makes Whisper useful for a variety of applications, such as real-time captioning, meeting transcription, and audio-to-text conversion.

What can I use it for?

Whisper can be used in various applications that require speech-to-text conversion, such as:

- Captioning and Subtitling: Automatically generate captions or subtitles for videos, improving accessibility for viewers.

- Meeting Transcription: Transcribe audio recordings of meetings, interviews, or conferences for easy review and sharing.

- Podcast Transcription: Convert audio podcasts to text, making the content more searchable and accessible.

- Language Translation: Transcribe audio in one language and translate the text to another, enabling cross-language communication.

- Voice Interfaces: Integrate Whisper into voice-controlled applications, such as virtual assistants or smart home devices.

Things to try

One interesting aspect of Whisper is its ability to handle a wide range of languages and accents. You can experiment with the model's performance on audio samples in different languages or with various background noises to see how it handles different real-world scenarios. Additionally, you can explore the impact of the different input parameters, such as temperature, patience, and language detection, on the transcription quality and accuracy.


parler-tts


parler-tts is a lightweight text-to-speech (TTS) model developed by cjwbw, a creator at Replicate. It is trained on 10.5K hours of audio data and can generate high-quality, natural-sounding speech with controllable features like gender, background noise, speaking rate, pitch, and reverberation. parler-tts is related to models like voicecraft, whisper, and sabuhi-model, which also focus on speech-related tasks. Additionally, the parler_tts_mini_v0.1 model provides a lightweight version of the parler-tts system.

Model inputs and outputs

The parler-tts model takes two main inputs: a text prompt and a text description. The prompt is the text to be converted into speech, while the description provides additional details to control the characteristics of the generated audio, such as the speaker's gender, pitch, speaking rate, and environmental factors.

Inputs

- Prompt: The text to be converted into speech.

- Description: A text description that provides details about the desired characteristics of the generated audio, such as the speaker's gender, pitch, speaking rate, and environmental factors.

Outputs

- Audio: The generated audio file in WAV format, which can be played back or further processed as needed.

Capabilities

The parler-tts model can generate high-quality, natural-sounding speech with a range of customizable features. Users can control the gender, pitch, speaking rate, and environmental factors of the generated audio by carefully crafting the text description. This allows for a high degree of flexibility and creativity in the generated output, making it useful for a variety of applications, such as audio production, virtual assistants, and language learning.

What can I use it for?

The parler-tts model can be used in a variety of applications that require text-to-speech functionality. Some potential use cases include:

- Audio production: The model can be used to generate natural-sounding voice-overs, narrations, or audio content for videos, podcasts, or other multimedia projects.

- Virtual assistants: The model's ability to generate speech with customizable characteristics can be used to create more personalized and engaging virtual assistants.

- Language learning: The model can be used to generate sample audio for language learning materials, providing learners with high-quality examples of pronunciation and intonation.

- Accessibility: The model can be used to generate audio versions of text content, improving accessibility for individuals with visual impairments or reading difficulties.

Things to try

One interesting aspect of the parler-tts model is its ability to generate speech with a high degree of control over the output characteristics. Users can experiment with different text descriptions to explore the range of speech styles and environmental factors that the model can produce. For example, try using different descriptors for the speaker's gender, pitch, and speaking rate, or add details about the recording environment, such as the level of background noise or reverberation. By fine-tuning the text description, users can create a wide variety of speech samples that can be used for various applications.


whisper


Whisper is a state-of-the-art speech recognition model developed by OpenAI. It is capable of transcribing audio into text with high accuracy, making it a valuable tool for a variety of applications. The model is implemented as a Cog model by the maintainer soykertje, allowing it to be easily integrated into various projects. Similar models like Whisper, Whisper Diarization, Whisper Large v3, WhisperSpeech Small, and WhisperX Spanish offer different variations and capabilities, catering to diverse speech recognition needs.

Model inputs and outputs

The Whisper model takes an audio file as input and generates a text transcription of the speech. The model also supports additional options, such as language specification, translation, and adjusting parameters like temperature and patience for the decoding process.

Inputs

- Audio: The audio file to be transcribed

- Model: The specific Whisper model to use

- Language: The language spoken in the audio

- Translate: Whether to translate the text to English

- Transcription: The format for the transcription (e.g., plain text)

- Temperature: The temperature to use for sampling

- Patience: The patience value to use in beam decoding

- Suppress Tokens: A comma-separated list of token IDs to suppress during sampling

- Word Timestamps: Whether to include word-level timestamps in the transcription

- Logprob Threshold: The threshold for the average log probability to consider the decoding as successful

- No Speech Threshold: The threshold for the probability of the token to consider the segment as silence

- Condition On Previous Text: Whether to provide the previous output as a prompt for the next window

- Compression Ratio Threshold: The threshold for the gzip compression ratio to consider the decoding as successful

- Temperature Increment On Fallback: The temperature increase when falling back due to the above thresholds

Outputs

- The transcribed text, with optional formatting and additional information such as word-level timestamps.

Capabilities

Whisper is a powerful speech recognition model that can accurately transcribe a wide range of audio content, including interviews, lectures, and spontaneous conversations. The model's ability to handle various accents, background noise, and speaker variations makes it a versatile tool for a variety of applications.

What can I use it for?

The Whisper model can be utilized in a range of applications, such as:

- Automated transcription of audio recordings for content creators, journalists, or researchers

- Real-time captioning for video conferencing or live events

- Voice-to-text conversion for accessibility purposes or hands-free interaction

- Language translation services, where the transcribed text can be further translated

- Developing voice-controlled interfaces or intelligent assistants

Things to try

Experimenting with the various input parameters of the Whisper model can help fine-tune the transcription quality for specific use cases. For example, adjusting the temperature and patience values can influence the model's sampling behavior, leading to more fluent or more conservative transcriptions. Additionally, leveraging the word-level timestamps can enable synchronized subtitles or captions in multimedia applications.
